Vision Transformer (ViT): Transformers for Computer Vision

The intersection of natural language processing and computer vision has led to one of the most exciting developments in artificial intelligence: the Vision Transformer (ViT). While transformers dominated text-based tasks for years, their application to images seemed challenging due to the fundamentally different nature of visual data. The Vision Transformer changed this narrative entirely, proving that the self-attention mechanism could work just as effectively for computer vision tasks.

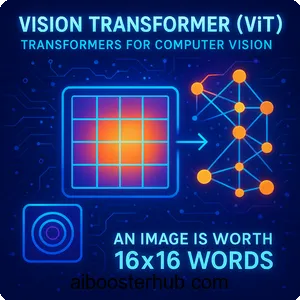

The key insight behind ViT is summed up in the title of the paper that introduced it: “An Image is Worth 16×16 Words.” Treating image patches as tokens changed how we approach image classification and other computer vision tasks, and showed that convolutional layers, the backbone of vision models for decades, are not strictly required.

1. Understanding the vision transformer architecture

The vision transformer architecture represents a paradigm shift in how machines process visual information. Unlike traditional convolutional neural networks that process images through local receptive fields, vision transformers treat images as sequences of patches, similar to how text transformers process sequences of words.

The core principle: images as sequences

At its heart, the ViT model transforms the image classification problem into a sequence processing task. Instead of analyzing pixels through convolution operations, ViT divides an image into fixed-size patches and processes these patches as tokens. This approach draws a direct parallel to how transformers handle sentences in NLP, where each word becomes a token.

Consider an image of size \( 224 \times 224 \) pixels. The ViT model divides this into patches of size \( 16 \times 16 \), resulting in \( (224/16) \times (224/16) = 14 \times 14 = 196 \) patches. Each patch is then flattened and linearly projected into an embedding space, creating what we call patch embeddings.

Breaking down the architecture

The vision transformer architecture consists of several key components working in harmony:

Patch embedding layer: This initial layer performs the crucial transformation from image space to sequence space. Each patch goes through a linear projection that maps it to a D-dimensional embedding vector.

Position embeddings: Self-attention has no built-in notion of token order (permuting the input tokens simply permutes the outputs), so we need to inject positional information. ViT uses learnable position embeddings that are added to patch embeddings, allowing the model to understand spatial relationships.

Transformer encoder: The core of the architecture consists of multiple transformer encoder layers, each containing multi-head self-attention and feed-forward networks with residual connections and layer normalization.

Classification head: Similar to BERT’s [CLS] token, ViT prepends a learnable class token to the sequence. The final state of this token is used for classification through a simple MLP head.

Let me illustrate this with a Python implementation:

```python
import torch
import torch.nn as nn


class PatchEmbedding(nn.Module):
    def __init__(self, img_size=224, patch_size=16, in_channels=3, embed_dim=768):
        super().__init__()
        self.img_size = img_size
        self.patch_size = patch_size
        self.n_patches = (img_size // patch_size) ** 2

        # Linear projection of flattened patches, implemented as a strided
        # convolution (kernel size = stride = patch size)
        self.projection = nn.Conv2d(
            in_channels,
            embed_dim,
            kernel_size=patch_size,
            stride=patch_size
        )

    def forward(self, x):
        # x shape: (batch_size, channels, height, width)
        x = self.projection(x)  # (batch_size, embed_dim, n_patches**0.5, n_patches**0.5)
        x = x.flatten(2)        # (batch_size, embed_dim, n_patches)
        x = x.transpose(1, 2)   # (batch_size, n_patches, embed_dim)
        return x


class VisionTransformer(nn.Module):
    def __init__(
        self,
        img_size=224,
        patch_size=16,
        in_channels=3,
        num_classes=1000,
        embed_dim=768,
        depth=12,
        num_heads=12,
        mlp_ratio=4.0,
        dropout=0.1
    ):
        super().__init__()

        # Patch embedding
        self.patch_embed = PatchEmbedding(img_size, patch_size, in_channels, embed_dim)
        num_patches = self.patch_embed.n_patches

        # Class token
        self.cls_token = nn.Parameter(torch.zeros(1, 1, embed_dim))

        # Position embeddings (one per patch, plus one for the class token)
        self.pos_embed = nn.Parameter(torch.zeros(1, num_patches + 1, embed_dim))
        self.pos_drop = nn.Dropout(dropout)

        # Transformer encoder blocks (TransformerBlock is defined in section 2)
        self.blocks = nn.ModuleList([
            TransformerBlock(embed_dim, num_heads, mlp_ratio, dropout)
            for _ in range(depth)
        ])

        # Classification head
        self.norm = nn.LayerNorm(embed_dim)
        self.head = nn.Linear(embed_dim, num_classes)

    def forward(self, x):
        batch_size = x.shape[0]

        # Patch embedding
        x = self.patch_embed(x)

        # Add class token
        cls_tokens = self.cls_token.expand(batch_size, -1, -1)
        x = torch.cat((cls_tokens, x), dim=1)

        # Add position embeddings
        x = x + self.pos_embed
        x = self.pos_drop(x)

        # Apply transformer blocks
        for block in self.blocks:
            x = block(x)

        # Classification: use the final state of the class token
        x = self.norm(x)
        cls_output = x[:, 0]
        return self.head(cls_output)
```

2. The mathematics of self-attention in vision transformers

Understanding the mathematical foundation of vision transformers is crucial for grasping why they work so effectively for computer vision. The self-attention mechanism allows each patch to attend to all other patches, creating a global understanding of the image.

Self-attention computation

The self-attention mechanism computes three matrices from the input: Query (Q), Key (K), and Value (V). For an input sequence \( X \in \mathbb{R}^{n \times d} \), where \( n \) is the number of patches and \( d \) is the embedding dimension:

$$ Q = XW_Q, \quad K = XW_K, \quad V = XW_V $$

where \( W_Q, W_K, W_V \in \mathbb{R}^{d \times d_k} \) are learned weight matrices.

The attention scores are computed using scaled dot-product attention:

$$ \text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^T}{\sqrt{d_k}}\right)V $$

The scaling factor \( \sqrt{d_k} \) prevents the dot products from growing too large, which would push the softmax function into regions with extremely small gradients.
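The effect of the scaling factor can be checked numerically. The following is a minimal sketch in plain Python; the helper names (`softmax`, `random_vector`) and the values `d_k = 512` and 8 keys are illustrative assumptions, not part of the ViT code. It compares the largest attention weight with and without division by \( \sqrt{d_k} \).

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def random_vector(dim, rng):
    """Vector with independent standard-normal components."""
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]


rng = random.Random(0)
d_k = 512      # illustrative key dimension (assumption)
num_keys = 8   # illustrative sequence length (assumption)

query = random_vector(d_k, rng)
keys = [random_vector(d_k, rng) for _ in range(num_keys)]

# Raw dot products have variance close to d_k, so they spread over a wide range
raw_scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
scaled_scores = [s / math.sqrt(d_k) for s in raw_scores]

unscaled_weights = softmax(raw_scores)
scaled_weights = softmax(scaled_scores)

print(f"largest weight without scaling: {max(unscaled_weights):.4f}")
print(f"largest weight with scaling:    {max(scaled_weights):.4f}")
```

Without scaling, the softmax output is typically close to one-hot, which is the saturated regime where gradients become very small. Dividing by \( \sqrt{d_k} \) can only flatten the distribution, never sharpen it.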

Multi-head attention

Vision transformers use multi-head attention, which allows the model to jointly attend to information from different representation subspaces. For \( h \) attention heads:

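$$ \text{MultiHead}(X) = \text{Concat}(\text{head}_1, \ldots, \text{head}_h)W_O $$

where each head applies scaled dot-product attention with its own projection matrices \( W_i^Q, W_i^K, W_i^V \in \mathbb{R}^{d \times d_k} \), typically with \( d_k = d/h \), and \( W_O \in \mathbb{R}^{d \times d} \) mixes the concatenated heads:

$$ \text{head}_i = \text{Attention}(XW_i^Q, XW_i^K, XW_i^V) $$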

Here’s the implementation:

```python
import torch.nn as nn


class MultiHeadAttention(nn.Module):
    def __init__(self, embed_dim, num_heads, dropout=0.1):
        super().__init__()
        assert embed_dim % num_heads == 0

        self.embed_dim = embed_dim
        self.num_heads = num_heads
        self.head_dim = embed_dim // num_heads
        self.scale = self.head_dim ** -0.5

        # Q, K, V projections for all heads, fused into one linear layer
        self.qkv = nn.Linear(embed_dim, embed_dim * 3)
        self.attn_drop = nn.Dropout(dropout)
        self.proj = nn.Linear(embed_dim, embed_dim)
        self.proj_drop = nn.Dropout(dropout)

    def forward(self, x):
        # num_patches here is the sequence length (patches plus class token)
        batch_size, num_patches, embed_dim = x.shape

        # Generate Q, K, V
        qkv = self.qkv(x).reshape(batch_size, num_patches, 3, self.num_heads, self.head_dim)
        qkv = qkv.permute(2, 0, 3, 1, 4)  # (3, batch_size, num_heads, num_patches, head_dim)
        q, k, v = qkv[0], qkv[1], qkv[2]

        # Attention scores
        attn = (q @ k.transpose(-2, -1)) * self.scale
        attn = attn.softmax(dim=-1)
        attn = self.attn_drop(attn)

        # Apply attention to values and merge the heads
        x = (attn @ v).transpose(1, 2).reshape(batch_size, num_patches, embed_dim)
        x = self.proj(x)
        x = self.proj_drop(x)
        return x


class TransformerBlock(nn.Module):
    def __init__(self, embed_dim, num_heads, mlp_ratio=4.0, dropout=0.1):
        super().__init__()
        self.norm1 = nn.LayerNorm(embed_dim)
        self.attn = MultiHeadAttention(embed_dim, num_heads, dropout)
        self.norm2 = nn.LayerNorm(embed_dim)

        # MLP block
        mlp_hidden_dim = int(embed_dim * mlp_ratio)
        self.mlp = nn.Sequential(
            nn.Linear(embed_dim, mlp_hidden_dim),
            nn.GELU(),
            nn.Dropout(dropout),
            nn.Linear(mlp_hidden_dim, embed_dim),
            nn.Dropout(dropout)
        )

    def forward(self, x):
        # Attention with residual connection (layer norm applied first)
        x = x + self.attn(self.norm1(x))
        # MLP with residual connection
        x = x + self.mlp(self.norm2(x))
        return x
```

3. Patch embeddings: the bridge between images and sequences

The concept of patch embeddings is what makes the vision transformer possible. This technique transforms the two-dimensional structure of images into one-dimensional sequences that transformers can process effectively.

Why 16×16 patches?

The phrase “an image is worth 16×16 words” encapsulates a fundamental design choice in ViT. While this patch size isn’t mandatory, it is a practical balance between computational efficiency and information preservation. Smaller patches create longer sequences, and self-attention cost grows quadratically with sequence length: halving the patch side to 8×8 quadruples the number of tokens and makes each attention matrix 16 times larger. Larger patches shorten the sequence but can lose important fine-grained details.

Let’s examine what happens mathematically when we create patch embeddings. For an input image \( x \in \mathbb{R}^{H \times W \times C} \), where \( H \) and \( W \) are height and width, and \( C \) is the number of channels:

- The image is divided into patches of size \( P \times P \)

- The number of patches becomes \( N = \frac{HW}{P^2} \)

- Each patch is flattened to a vector of dimension \( P^2 \cdot C \)

- A linear projection maps each flattened patch to dimension \( D \)

The resulting sequence has shape \( N \times D \), ready for transformer processing.
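To make the bookkeeping concrete, here is a small sketch that evaluates these formulas for a given configuration. The function name `patch_sequence_shape` and its return format are hypothetical, chosen only for illustration.

```python
def patch_sequence_shape(height, width, channels, patch_size, embed_dim):
    """Evaluate N = HW / P^2 and the flattened patch size P^2 * C."""
    if height % patch_size or width % patch_size:
        raise ValueError("image size must be divisible by the patch size")

    num_patches = (height * width) // (patch_size ** 2)   # N
    flat_dim = patch_size ** 2 * channels                 # P^2 * C
    # A linear projection from flat_dim to embed_dim: weights plus bias
    projection_params = flat_dim * embed_dim + embed_dim

    return {
        "num_patches": num_patches,
        "flat_dim": flat_dim,
        "sequence_shape": (num_patches, embed_dim),        # N x D
        "projection_params": projection_params,
    }


base = patch_sequence_shape(224, 224, 3, patch_size=16, embed_dim=768)
fine = patch_sequence_shape(224, 224, 3, patch_size=8, embed_dim=768)

print(base)
print(fine)
```

For a 224×224 RGB image, 16×16 patches give 196 tokens of flattened size 768, while 8×8 patches give 784 tokens of flattened size 192. The shorter sequence is what keeps the attention cost manageable.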

Positional encoding strategies

Unlike text, where word order is inherently sequential, images have two-dimensional spatial structure. Vision transformers must encode this 2D positional information. There are several approaches:

Learnable 1D position embeddings: The standard ViT approach treats the sequence of patches as 1D and learns position embeddings during training. Despite seeming simplistic, this works remarkably well.

2D position embeddings: Some variants explicitly encode row and column information, creating separate embeddings for horizontal and vertical positions.

Sinusoidal position encodings: Similar to the original Transformer paper, some implementations use fixed sinusoidal functions.

Here’s a comparison implementation:

```python
import torch
import torch.nn as nn


class PositionalEmbedding(nn.Module):
    def __init__(self, num_patches, embed_dim, embedding_type='learnable'):
        super().__init__()
        self.embedding_type = embedding_type

        if embedding_type == 'learnable':
            self.pos_embed = nn.Parameter(torch.zeros(1, num_patches + 1, embed_dim))
        elif embedding_type == '2d':
            # For 2D positional embeddings: half of the channels encode the
            # row, the other half the column (embed_dim must be even)
            self.grid_size = int(num_patches ** 0.5)
            self.pos_embed_h = nn.Parameter(torch.zeros(1, self.grid_size, embed_dim // 2))
            self.pos_embed_w = nn.Parameter(torch.zeros(1, self.grid_size, embed_dim // 2))
        elif embedding_type == 'sinusoidal':
            # Fixed encoding: a buffer follows the module across devices but
            # is never updated by the optimizer
            self.register_buffer(
                'pos_embed',
                self._get_sinusoidal_encoding(num_patches + 1, embed_dim)
            )
        else:
            raise ValueError(f"Unknown embedding_type: {embedding_type}")

    def _get_sinusoidal_encoding(self, num_positions, embed_dim):
        position = torch.arange(num_positions, dtype=torch.float32).unsqueeze(1)
        div_term = torch.exp(
            torch.arange(0, embed_dim, 2) * -(torch.log(torch.tensor(10000.0)) / embed_dim)
        )
        pos_embed = torch.zeros(1, num_positions, embed_dim)
        pos_embed[0, :, 0::2] = torch.sin(position * div_term)
        pos_embed[0, :, 1::2] = torch.cos(position * div_term)
        return pos_embed

    def forward(self, x):
        if self.embedding_type == 'learnable' or self.embedding_type == 'sinusoidal':
            return x + self.pos_embed
        elif self.embedding_type == '2d':
            # num_patches here is the sequence length, including the class token
            batch_size, num_patches, embed_dim = x.shape

            # Reshape to 2D grid (the class token gets no positional embedding)
            cls_token = x[:, 0:1, :]
            patches = x[:, 1:, :].reshape(batch_size, self.grid_size, self.grid_size, embed_dim)

            # Add 2D positional embeddings
            pos_h = self.pos_embed_h.unsqueeze(2).expand(-1, -1, self.grid_size, -1)
            pos_w = self.pos_embed_w.unsqueeze(1).expand(-1, self.grid_size, -1, -1)
            pos_2d = torch.cat([pos_h, pos_w], dim=-1)
            patches = patches + pos_2d

            patches = patches.reshape(batch_size, num_patches - 1, embed_dim)
            return torch.cat([cls_token, patches], dim=1)
```
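To see why any of these embeddings is needed at all, it helps to check what self-attention does when the patch order is shuffled. The sketch below is plain Python with identity projections (Q = K = V = tokens); the token values and the `pos_embed` table are made-up toy numbers, and the function names are hypothetical.

```python
import math


def self_attention(tokens):
    """Single-head self-attention with identity projections."""
    dim = len(tokens[0])
    outputs = []
    for query in tokens:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim) for key in tokens]
        peak = max(scores)
        exps = [math.exp(s - peak) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        outputs.append([sum(w * tok[j] for w, tok in zip(weights, tokens)) for j in range(dim)])
    return outputs


def add_positions(tokens, pos_embed):
    """Add the embedding of slot i to whichever token sits in slot i."""
    return [[t + p for t, p in zip(tok, pos)] for tok, pos in zip(tokens, pos_embed)]


def same_up_to_order(out_shuffled, out, perm):
    """True if out_shuffled is just out re-ordered by perm."""
    return all(
        math.isclose(a, b, abs_tol=1e-9)
        for i, p in enumerate(perm)
        for a, b in zip(out_shuffled[i], out[p])
    )


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # three toy "patches"
perm = [2, 0, 1]
shuffled = [tokens[i] for i in perm]

# Without position information, shuffling the input only shuffles the output
without_pos = same_up_to_order(self_attention(shuffled), self_attention(tokens), perm)

# With position embeddings, the same shuffle changes the result
pos_embed = [[0.5, 0.0], [0.0, 0.5], [-0.5, -0.5]]   # toy values (assumption)
with_pos = same_up_to_order(
    self_attention(add_positions(shuffled, pos_embed)),
    self_attention(add_positions(tokens, pos_embed)),
    perm,
)

print("order ignored without positions:", without_pos)
print("order ignored with positions:   ", with_pos)
```

The first check passes because attention alone cannot tell where a patch came from; the second fails because each slot now carries its own positional signal.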

4. Training and fine-tuning vision transformers

The training process for vision transformers differs significantly from traditional convolutional networks. Understanding these differences is crucial for successfully applying ViT to real-world problems.

Pre-training strategies

Vision transformers typically require large-scale pre-training to achieve competitive performance. The original ViT model was pre-trained on massive datasets like ImageNet-21k or JFT-300M. This pre-training phase teaches the model general visual representations.

The pre-training objective is straightforward image classification with cross-entropy loss:

$$ \mathcal{L} = -\sum_{i=1}^{C} y_i \log(\hat{y}_i) $$

where \( C \) is the number of classes, \( y_i \) is 1 for the true class and 0 otherwise (a one-hot label), and \( \hat{y}_i \) is the predicted probability for class \( i \).
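As a quick worked example of this loss, the sketch below evaluates it for a single image with three classes. The logits are arbitrary illustrative values, and the helper names are hypothetical. Because the label is one-hot, the sum collapses to the negative log-probability of the true class.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, true_class):
    """-sum_i y_i * log(y_hat_i) with a one-hot label y."""
    probs = softmax(logits)
    return -math.log(probs[true_class])


logits = [2.0, 1.0, 0.1]                 # illustrative model outputs
probs = softmax(logits)
loss_correct = cross_entropy(logits, 0)  # true class is the top-scoring one
loss_wrong = cross_entropy(logits, 2)    # true class is the lowest-scoring one

print("probabilities:", [round(p, 3) for p in probs])
print(f"loss when class 0 is correct: {loss_correct:.4f}")
print(f"loss when class 2 is correct: {loss_wrong:.4f}")
```

The loss is small when the model puts most of its probability on the true class and grows quickly when it does not.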

Data augmentation importance

Vision transformers are particularly sensitive to the amount and quality of training data. Unlike CNNs, which have inductive biases (translation equivariance through convolutions), transformers must learn these properties from data. Strong data augmentation becomes essential:

```python
import torchvision.transforms as transforms


def get_training_augmentation():
    """
    Comprehensive augmentation strategy for training vision transformers
    """
    return transforms.Compose([
        transforms.RandomResizedCrop(224, scale=(0.05, 1.0)),
        transforms.RandomHorizontalFlip(),
        transforms.ColorJitter(brightness=0.4, contrast=0.4, saturation=0.4, hue=0.1),
        transforms.RandomRotation(15),
        transforms.RandomAffine(degrees=0, translate=(0.1, 0.1)),
        transforms.ToTensor(),
        transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]),
        transforms.RandomErasing(p=0.25)
    ])


def get_validation_augmentation():
    """
    Simple augmentation for validation/inference
    """
    return transforms.Compose([
        transforms.Resize(256),
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])
    ])
```

Fine-tuning for downstream tasks

After pre-training, vision transformers can be fine-tuned for specific tasks. The fine-tuning process typically involves:

- Loading pre-trained weights: Initialize the model with weights learned on a large dataset

- Adjusting the classification head: Replace the final layer to match the target task’s number of classes

- Using a lower learning rate: Fine-tuning requires careful learning rate scheduling to avoid catastrophic forgetting
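The three steps above can be sketched as follows. This is only an outline under stated assumptions: `TinyBackbone` is a hypothetical stand-in for a pre-trained ViT (any module with a `head` attribute would do), the "checkpoint" is a freshly initialised state dict, and the learning rates and weight decay are illustrative values rather than recommended settings.

```python
import torch
import torch.nn as nn


class TinyBackbone(nn.Module):
    """Hypothetical stand-in for a pre-trained ViT: an encoder plus a `head`."""

    def __init__(self, embed_dim=32, num_classes=1000):
        super().__init__()
        self.encoder = nn.Sequential(nn.Linear(48, embed_dim), nn.GELU())
        self.head = nn.Linear(embed_dim, num_classes)

    def forward(self, x):
        return self.head(self.encoder(x))


def prepare_for_finetuning(model, pretrained_state, num_classes,
                           backbone_lr=1e-5, head_lr=1e-3):
    # 1. Load pre-trained weights
    model.load_state_dict(pretrained_state)

    # 2. Replace the classification head to match the target task
    model.head = nn.Linear(model.head.in_features, num_classes)

    # 3. Use a lower learning rate for the pre-trained layers than for the new head
    backbone_params = [p for name, p in model.named_parameters()
                       if not name.startswith("head.")]
    optimizer = torch.optim.AdamW(
        [
            {"params": backbone_params, "lr": backbone_lr},
            {"params": model.head.parameters(), "lr": head_lr},
        ],
        weight_decay=0.05,
    )
    return model, optimizer


pretrained_state = TinyBackbone().state_dict()   # pretend checkpoint (assumption)
model, optimizer = prepare_for_finetuning(TinyBackbone(), pretrained_state, num_classes=10)
logits = model(torch.randn(4, 48))
print(logits.shape)
```

Giving the new head a larger learning rate than the backbone is one common design choice; a single small learning rate for all parameters is another.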

Here’s a complete training pipeline:

```python
import torch
import torch.nn as nn
import torch.optim as optim
from torch.utils.data import DataLoader
from torchvision.datasets import ImageFolder


def train_vit(model, train_loader, val_loader, num_epochs=100, device='cuda'):
    """
    Complete training loop for vision transformer
    """
    model = model.to(device)

    # Optimizer with weight decay (important for transformers)
    optimizer = optim.AdamW(model.parameters(), lr=3e-4, weight_decay=0.3)

    # Cosine annealing scheduler, stepped once per epoch
    scheduler = optim.lr_scheduler.CosineAnnealingLR(optimizer, T_max=num_epochs)

    # Loss function
    criterion = nn.CrossEntropyLoss(label_smoothing=0.1)

    best_val_acc = 0.0

    for epoch in range(num_epochs):
        # Training phase
        model.train()
        train_loss = 0.0
        train_correct = 0
        train_total = 0

        for batch_idx, (images, labels) in enumerate(train_loader):
            images, labels = images.to(device), labels.to(device)

            # Forward pass
            outputs = model(images)
            loss = criterion(outputs, labels)

            # Backward pass
            optimizer.zero_grad()
            loss.backward()

            # Gradient clipping
            torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm=1.0)
            optimizer.step()

            # Statistics
            train_loss += loss.item()
            _, predicted = outputs.max(1)
            train_total += labels.size(0)
            train_correct += predicted.eq(labels).sum().item()

        train_acc = 100. * train_correct / train_total

        # Validation phase
        model.eval()
        val_loss = 0.0
        val_correct = 0
        val_total = 0

        with torch.no_grad():
            for images, labels in val_loader:
                images, labels = images.to(device), labels.to(device)
                outputs = model(images)
                loss = criterion(outputs, labels)

                val_loss += loss.item()
                _, predicted = outputs.max(1)
                val_total += labels.size(0)
                val_correct += predicted.eq(labels).sum().item()

        val_acc = 100. * val_correct / val_total

        # Learning rate scheduling
        scheduler.step()

        print(f'Epoch {epoch+1}/{num_epochs}')
        print(f'Train Loss: {train_loss/len(train_loader):.4f}, Train Acc: {train_acc:.2f}%')
        print(f'Val Loss: {val_loss/len(val_loader):.4f}, Val Acc: {val_acc:.2f}%')

        # Save best model
        if val_acc > best_val_acc:
            best_val_acc = val_acc
            torch.save(model.state_dict(), 'best_vit_model.pth')

    return model


# Example usage
def main():
    # Create model
    model = VisionTransformer(
        img_size=224,
        patch_size=16,
        num_classes=10,
        embed_dim=768,
        depth=12,
        num_heads=12
    )

    # Prepare datasets
    train_dataset = ImageFolder('path/to/train', transform=get_training_augmentation())
    val_dataset = ImageFolder('path/to/val', transform=get_validation_augmentation())

    train_loader = DataLoader(train_dataset, batch_size=32, shuffle=True, num_workers=4)
    val_loader = DataLoader(val_dataset, batch_size=32, shuffle=False, num_workers=4)

    # Train model
    trained_model = train_vit(model, train_loader, val_loader, num_epochs=100)


if __name__ == '__main__':
    main()
```
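The cosine annealing schedule used in `train_vit` is easier to reason about when written out in closed form. The sketch below assumes the scheduler's default minimum learning rate of zero and one scheduler step per epoch, as in the loop above; `cosine_annealing_lr` is a hypothetical helper for illustration, not a PyTorch function.

```python
import math


def cosine_annealing_lr(epoch, total_epochs, base_lr=3e-4, min_lr=0.0):
    """Learning rate after `epoch` scheduler steps, in closed form."""
    cosine = math.cos(math.pi * epoch / total_epochs)
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + cosine)


checkpoints = list(range(0, 101, 25))
schedule = [cosine_annealing_lr(epoch, total_epochs=100) for epoch in checkpoints]

for epoch, lr in zip(checkpoints, schedule):
    print(f"epoch {epoch:3d}: lr = {lr:.2e}")
```

The rate starts at the base value, passes through half of it at the midpoint, and decays smoothly to zero by the final epoch, with no abrupt drops along the way.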

5. Vision transformer variants and improvements

Since the introduction of the original ViT model, researchers have developed numerous variants addressing different aspects of performance, efficiency, and applicability. Understanding these variants helps in selecting the right architecture for specific applications.

Hierarchical vision transformers

One limitation of the original ViT is that it processes all patches at a single scale. Hierarchical variants like Swin Transformer introduce multi-scale feature hierarchies similar to CNNs:

- Shifted window attention: Instead of global attention across all patches, attention is computed within local windows that shift between layers

- Patch merging: Progressively reduces spatial resolution while increasing feature dimensions

- Computational efficiency: Reduces attention complexity from \( O(n^2) \) to \( O(n) \) for a fixed window size, where \( n \) is the number of patches
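The difference between global and windowed attention can be made concrete by counting query-key pairs. This is a back-of-the-envelope sketch: the function name is hypothetical, and the 56×56 token grid with 7×7 windows is an illustrative configuration. It ignores heads, channels, and the shifting step.

```python
def attention_pairs(grid_size, window_size=None):
    """Number of query-key pairs for a square grid of tokens.

    window_size=None means global attention over all tokens;
    otherwise attention is restricted to non-overlapping square windows.
    """
    num_tokens = grid_size * grid_size
    if window_size is None:
        return num_tokens * num_tokens
    if grid_size % window_size:
        raise ValueError("grid size must be divisible by the window size")
    num_windows = (grid_size // window_size) ** 2
    tokens_per_window = window_size ** 2
    return num_windows * tokens_per_window ** 2


global_56 = attention_pairs(56)
window_56 = attention_pairs(56, window_size=7)
global_112 = attention_pairs(112)
window_112 = attention_pairs(112, window_size=7)

print(f"56x56 grid:   global={global_56:,}  windowed={window_56:,}")
print(f"112x112 grid: global={global_112:,}  windowed={window_112:,}")
print(f"growth when tokens quadruple: global x{global_112 // global_56}, "
      f"windowed x{window_112 // window_56}")
```

Quadrupling the number of tokens multiplies the global cost by 16 but the windowed cost only by 4, which is the linear scaling referred to above.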

Hybrid approaches

Combining the strengths of convolutional neural networks and transformers has proven effective:

Convolutional stem: Replace patch embeddings with a few convolutional layers to extract low-level features before applying transformer blocks. This provides better inductive bias for smaller datasets.

```python
import torch.nn as nn


class ConvStem(nn.Module):
    def __init__(self, in_channels=3, embed_dim=768):
        super().__init__()
        # Four stride-2 convolutions downsample by 16x in total, matching
        # the token grid produced by 16x16 patch embedding
        self.conv_layers = nn.Sequential(
            nn.Conv2d(in_channels, embed_dim//8, kernel_size=3, stride=2, padding=1),
            nn.BatchNorm2d(embed_dim//8),
            nn.ReLU(inplace=True),
            nn.Conv2d(embed_dim//8, embed_dim//4, kernel_size=3, stride=2, padding=1),
            nn.BatchNorm2d(embed_dim//4),
            nn.ReLU(inplace=True),
            nn.Conv2d(embed_dim//4, embed_dim//2, kernel_size=3, stride=2, padding=1),
            nn.BatchNorm2d(embed_dim//2),
            nn.ReLU(inplace=True),
            nn.Conv2d(embed_dim//2, embed_dim, kernel_size=3, stride=2, padding=1),
        )

    def forward(self, x):
        x = self.conv_layers(x)  # (batch_size, embed_dim, H/16, W/16)
        # Reshape to sequence format: (batch_size, num_tokens, embed_dim)
        x = x.flatten(2).transpose(1, 2)
        return x
```

Efficient vision transformers

To make vision transformers more practical for resource-constrained environments:

DeiT (Data-efficient image Transformers): Introduces distillation tokens and knowledge distillation to train effectively on smaller datasets like ImageNet-1K without massive pre-training.

MobileViT: Combines lightweight convolutions with transformers for mobile deployment, achieving a balance between accuracy and computational cost.

Cross-attention mechanisms

For tasks beyond classification, such as object detection and segmentation, specialized attention mechanisms have been developed:

```python
import torch.nn as nn


class CrossAttention(nn.Module):
    def __init__(self, query_dim, context_dim, num_heads=8, dropout=0.1):
        super().__init__()
        assert query_dim % num_heads == 0

        # Stored because forward() needs it to merge the heads
        self.query_dim = query_dim
        self.num_heads = num_heads
        self.head_dim = query_dim // num_heads
        self.scale = self.head_dim ** -0.5

        # Queries come from one sequence, keys and values from the context
        self.q_proj = nn.Linear(query_dim, query_dim)
        self.k_proj = nn.Linear(context_dim, query_dim)
        self.v_proj = nn.Linear(context_dim, query_dim)
        self.out_proj = nn.Linear(query_dim, query_dim)
        self.dropout = nn.Dropout(dropout)

    def forward(self, query, context):
        batch_size = query.shape[0]

        # Project query, key, value
        q = self.q_proj(query).reshape(batch_size, -1, self.num_heads, self.head_dim).transpose(1, 2)
        k = self.k_proj(context).reshape(batch_size, -1, self.num_heads, self.head_dim).transpose(1, 2)
        v = self.v_proj(context).reshape(batch_size, -1, self.num_heads, self.head_dim).transpose(1, 2)

        # Attention
        attn = (q @ k.transpose(-2, -1)) * self.scale
        attn = attn.softmax(dim=-1)
        attn = self.dropout(attn)

        # Apply attention to values
        out = (attn @ v).transpose(1, 2).reshape(batch_size, -1, self.query_dim)
        out = self.out_proj(out)
        return out
```

6. Practical applications and use cases

Vision transformers have demonstrated remarkable versatility across various computer vision tasks. Their ability to capture long-range dependencies makes them particularly effective for complex visual understanding problems.

Image classification at scale

The primary application of vision transformers remains image classification. When pre-trained on large datasets, ViT models achieve state-of-the-art results on standard benchmarks. For practical deployment:

```python
import torch
from PIL import Image


def inference_with_vit(model, image_path, transform, class_names):
    """
    Perform inference on a single image
    """
    # Load and preprocess image
    image = Image.open(image_path).convert('RGB')

    # Run on whichever device the model's weights live on
    device = next(model.parameters()).device
    image_tensor = transform(image).unsqueeze(0).to(device)

    # Set model to evaluation mode
    model.eval()

    with torch.no_grad():
        outputs = model(image_tensor)
        probabilities = torch.softmax(outputs, dim=1)
        # Guard against models with fewer than five classes
        k = min(5, probabilities.shape[1])
        topk_prob, topk_idx = torch.topk(probabilities, k)

    # Display results
    print(f"\nTop {k} predictions for {image_path}:")
    for i in range(k):
        print(f"{class_names[topk_idx[0][i].item()]}: {topk_prob[0][i].item()*100:.2f}%")

    return topk_idx[0][0].item()
```

Object detection with DETR

Detection Transformer (DETR) pairs an image backbone with a transformer encoder-decoder for end-to-end object detection, eliminating the need for hand-crafted components like anchor boxes:

- Treats object detection as a set prediction problem

- Uses bipartite matching between predicted and ground truth objects

- Extracts image features with a backbone (a CNN in the original DETR, which can be swapped for a vision transformer) and refines them with a transformer encoder

- Decoder starts from a fixed set of learned object queries that attend to the encoded image features; each query outputs one prediction (a class and box, or "no object")
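The bipartite matching step can be illustrated on a toy cost matrix. The sketch below brute-forces every one-to-one assignment, which is only practical for a handful of objects; real implementations use the Hungarian algorithm instead. The cost values and the function name `match_predictions` are made up for illustration.

```python
from itertools import permutations


def match_predictions(cost):
    """Return (assignment, total_cost) minimising the summed matching cost.

    cost[i][j] is the cost of assigning prediction i to ground-truth object j;
    assignment[i] is the ground-truth index chosen for prediction i.
    """
    n = len(cost)
    best_assignment, best_total = None, float("inf")
    for candidate in permutations(range(n)):
        total = sum(cost[i][candidate[i]] for i in range(n))
        if total < best_total:
            best_assignment, best_total = candidate, total
    return best_assignment, best_total


# Rows: predictions, columns: ground-truth objects (illustrative costs)
cost = [
    [0.9, 0.1, 0.8],
    [0.2, 0.7, 0.9],
    [0.8, 0.9, 0.3],
]
assignment, total = match_predictions(cost)
print("assignment:", assignment)
print("total cost:", round(total, 2))
```

Each prediction is paired with exactly one ground-truth object, so duplicate detections of the same object are penalised by the loss rather than filtered out afterwards.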

Semantic segmentation

Vision transformers excel at dense prediction tasks like semantic segmentation. Their global receptive field from the first layer enables better understanding of object boundaries and context:

```python
import torch.nn as nn


class SegmentationHead(nn.Module):
    def __init__(self, embed_dim, num_classes, img_size=224, patch_size=16):
        super().__init__()
        self.img_size = img_size
        self.patch_size = patch_size
        self.num_patches = (img_size // patch_size) ** 2

        # Upsampling layers
        self.decoder = nn.Sequential(
            nn.Conv2d(embed_dim, embed_dim//2, kernel_size=3, padding=1),
            nn.BatchNorm2d(embed_dim//2),
            nn.ReLU(inplace=True),
            nn.Upsample(scale_factor=2, mode='bilinear', align_corners=True),
            nn.Conv2d(embed_dim//2, embed_dim//4, kernel_size=3, padding=1),
            nn.BatchNorm2d(embed_dim//4),
            nn.ReLU(inplace=True),
            nn.Upsample(scale_factor=2, mode='bilinear', align_corners=True),
            nn.Conv2d(embed_dim//4, num_classes, kernel_size=1)
        )

    def forward(self, x):
        # x: encoder output of shape (batch_size, num_patches + 1, embed_dim)
        # Remove class token
        x = x[:, 1:, :]

        # Reshape to 2D feature map
        batch_size = x.shape[0]
        h = w = int(self.num_patches ** 0.5)
        x = x.transpose(1, 2).reshape(batch_size, -1, h, w)

        # Upsample to original image size
        x = self.decoder(x)
        x = nn.functional.interpolate(x, size=(self.img_size, self.img_size), mode='bilinear', align_corners=True)
        return x
```

Video understanding

Extending vision transformers to video involves adding temporal modeling capabilities. This can be done by:

- Treating video as a sequence of frame patches

- Using temporal position embeddings

- Applying attention across both spatial and temporal dimensions

Medical imaging

Vision transformers have shown promising results in medical imaging tasks where understanding global context is crucial:

- X-ray and CT scan analysis

- Pathology image classification

- Retinal disease detection

- MRI segmentation

The self-attention mechanism helps identify subtle patterns and relationships across the entire medical image, which is often critical for accurate diagnosis.

7. Advantages, limitations, and future directions

Understanding the trade-offs of vision transformers helps in making informed decisions about when to use them versus traditional approaches.

Key advantages

Global receptive field: Unlike CNNs where receptive fields grow gradually through layers, ViT has a global view from the first layer. This enables capturing long-range dependencies essential for understanding complex scenes.

Scalability: Vision transformers scale better with data and model size. Larger ViT models consistently improve performance when trained on more data, following similar scaling laws as in NLP.

Flexibility: The same architecture can be adapted to various vision tasks with minimal modifications. This unified approach simplifies research and deployment pipelines.

Interpretability: Attention maps provide insights into which image regions the model focuses on, offering better understanding of model decisions compared to convolutional filters.

Current limitations

Data hunger: Vision transformers require substantial amounts of training data to perform well. Without large-scale pre-training, they often underperform CNNs on smaller datasets due to lack of inductive biases.

Computational cost: The quadratic complexity of self-attention with respect to sequence length makes ViT expensive for high-resolution images. Processing a \( 512 \times 512 \) image with \( 16 \times 16 \) patches creates 1,024 tokens, leading to attention computations of size \( 1024 \times 1024 \).

Memory requirements: Storing attention matrices and intermediate activations demands significant memory, limiting batch sizes and making deployment on edge devices challenging.

Limited inductive bias: While flexibility is an advantage, the lack of built-in assumptions about image structure (like translation equivariance) means transformers must learn these properties from data, requiring more training samples.

Here’s a practical example showing computational considerations:

```python
def estimate_vit_complexity(img_size, patch_size, embed_dim, num_heads, num_layers):
    """
    Estimate computational complexity and memory usage of ViT
    """
    num_patches = (img_size // patch_size) ** 2

    # FLOPs calculation (simplified: one multiply-accumulate counts as one
    # operation; the class token, biases and layer norms are ignored)
    # Patch embedding
    patch_embed_flops = img_size * img_size * 3 * embed_dim

    # Self-attention per layer: Q, K, V and output projections (linear in the
    # number of patches) plus QK^T and attention-weighted values (quadratic)
    attention_flops_per_layer = (
        4 * num_patches * embed_dim * embed_dim
        + 2 * num_patches * num_patches * embed_dim
    )

    # MLP per layer (assuming mlp_ratio=4)
    mlp_flops_per_layer = 2 * num_patches * embed_dim * (4 * embed_dim)

    # Total for all layers
    total_flops = patch_embed_flops + num_layers * (attention_flops_per_layer + mlp_flops_per_layer)

    # Memory estimation (bytes)
    # Model parameters
    param_memory = (
        embed_dim * 3 * patch_size * patch_size +  # Patch embedding
        num_layers * (
            4 * embed_dim * embed_dim +  # QKV projections and output
            2 * embed_dim * 4 * embed_dim  # MLP
        )
    ) * 4  # 4 bytes per float32

    # Activation memory (for one image)
    activation_memory = (
        num_patches * embed_dim +  # Patch embeddings
        num_layers * num_patches * embed_dim * 2 +  # Intermediate activations
        num_layers * num_heads * num_patches * num_patches  # Attention maps
    ) * 4

    print(f"Configuration: {img_size}x{img_size} image, {patch_size}x{patch_size} patches")
    print(f"Number of patches: {num_patches}")
    print(f"Total FLOPs: {total_flops / 1e9:.2f} GFLOPs")
    print(f"Parameter memory: {param_memory / 1e6:.2f} MB")
    print(f"Activation memory (per image): {activation_memory / 1e6:.2f} MB")

    return total_flops, param_memory, activation_memory


# Example comparison
print("ViT-Base configuration:")
estimate_vit_complexity(224, 16, 768, 12, 12)

print("\nViT-Large configuration:")
estimate_vit_complexity(224, 16, 1024, 16, 24)

print("\nHigh-resolution ViT-Base:")
estimate_vit_complexity(384, 16, 768, 12, 12)
```

Future research directions

The field of vision transformers continues to evolve rapidly, with several promising directions:

Efficient architectures: Developing variants that reduce computational complexity while maintaining performance. This includes sparse attention mechanisms, linear attention approximations, and hybrid architectures that strategically combine convolutions and self-attention.

Self-supervised learning: Creating better pre-training objectives that don’t require labeled data. Masked image modeling (similar to BERT’s masked language modeling) has shown promise, allowing models to learn representations from unlabeled images.

Multimodal integration: Combining vision transformers with language models for tasks requiring both visual and textual understanding, such as image captioning, visual question answering, and text-to-image generation.

Dynamic architectures: Implementing adaptive computation where different image regions receive different amounts of processing based on complexity, similar to how humans focus attention on salient regions.

Theoretical understanding: Deepening our understanding of why vision transformers work, what they learn in different layers, and how to design better training procedures based on theoretical insights.

Here’s an example of a simple masked image modeling implementation:

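```python
import torch
import torch.nn as nn


class MaskedImageModeling(nn.Module):
    # Assumes the VisionTransformer and TransformerBlock classes defined earlier
    def __init__(self, vit_model, mask_ratio=0.75):
        super().__init__()
        self.vit = vit_model
        self.mask_ratio = mask_ratio

        # VisionTransformer does not store embed_dim, so read it from pos_embed
        embed_dim = vit_model.pos_embed.shape[-1]
        num_patches = vit_model.patch_embed.n_patches
        patch_size = vit_model.patch_embed.patch_size
        num_heads = vit_model.blocks[0].attn.num_heads

        # Lightweight decoder (a simplification chosen for this sketch): a
        # learnable mask token fills the masked slots, decoder position
        # embeddings mark where each slot is, and one transformer block lets
        # the masked slots attend to the visible patches
        self.mask_token = nn.Parameter(torch.zeros(1, 1, embed_dim))
        self.decoder_pos_embed = nn.Parameter(torch.zeros(1, num_patches, embed_dim))
        self.decoder_block = TransformerBlock(embed_dim, num_heads)
        self.decoder = nn.Linear(embed_dim, patch_size ** 2 * 3)

    def random_masking(self, x, mask_ratio):
        """
        Randomly mask patches for self-supervised learning
        """
        batch_size, num_patches, embed_dim = x.shape
        num_keep = int(num_patches * (1 - mask_ratio))

        # Random shuffle
        noise = torch.rand(batch_size, num_patches, device=x.device)
        ids_shuffle = torch.argsort(noise, dim=1)
        ids_restore = torch.argsort(ids_shuffle, dim=1)

        # Keep subset
        ids_keep = ids_shuffle[:, :num_keep]
        x_masked = torch.gather(x, dim=1, index=ids_keep.unsqueeze(-1).repeat(1, 1, embed_dim))

        # Generate binary mask in the original patch order (0 = kept, 1 = masked)
        mask = torch.ones([batch_size, num_patches], device=x.device)
        mask[:, :num_keep] = 0
        mask = torch.gather(mask, dim=1, index=ids_restore)

        return x_masked, mask, ids_restore

    def forward(self, x):
        # Encode patches
        patches = self.vit.patch_embed(x)
        patches = patches + self.vit.pos_embed[:, 1:, :]

        # Random masking
        patches_masked, mask, ids_restore = self.random_masking(patches, self.mask_ratio)

        # Add class token
        cls_token = self.vit.cls_token + self.vit.pos_embed[:, :1, :]
        cls_tokens = cls_token.expand(patches_masked.shape[0], -1, -1)
        x = torch.cat([cls_tokens, patches_masked], dim=1)

        # Apply transformer blocks to the visible patches only
        for block in self.vit.blocks:
            x = block(x)
        x = self.vit.norm(x)

        # Remove class token
        x = x[:, 1:, :]

        # Append mask tokens and restore the original patch order
        num_masked = ids_restore.shape[1] - x.shape[1]
        mask_tokens = self.mask_token.expand(x.shape[0], num_masked, -1)
        x = torch.cat([x, mask_tokens], dim=1)
        x = torch.gather(x, dim=1, index=ids_restore.unsqueeze(-1).expand(-1, -1, x.shape[-1]))

        # Decode to reconstruct pixels for every patch position
        x = self.decoder_block(x + self.decoder_pos_embed)
        x = self.decoder(x)  # (batch_size, num_patches, patch_size**2 * 3)

        return x, mask, ids_restore


def masked_image_modeling_loss(pred, target, mask):
    """
    Compute loss only on masked patches

    pred, target: (batch_size, num_patches, patch_size**2 * 3)
    mask: (batch_size, num_patches), 1 for masked patches and 0 for visible ones
    """
    loss = (pred - target) ** 2
    loss = loss.mean(dim=-1)  # Mean per patch
    loss = (loss * mask).sum() / mask.sum()  # Mean over masked patches
    return loss
```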
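The loss above compares predictions against per-patch pixel targets, so the input image has to be cut into the same patches, in the same row-major order that the patch embedding uses. Here is one way this could look; `patchify` is a hypothetical helper written for this sketch, and the channel-last layout inside each patch is an arbitrary choice.

```python
import torch


def patchify(images, patch_size=16):
    """Split images into flattened, non-overlapping patches.

    images: (batch_size, channels, height, width)
    returns: (batch_size, num_patches, patch_size**2 * channels),
             with patches ordered row by row across the image
    """
    b, c, h, w = images.shape
    assert h % patch_size == 0 and w % patch_size == 0
    gh, gw = h // patch_size, w // patch_size

    x = images.reshape(b, c, gh, patch_size, gw, patch_size)
    x = x.permute(0, 2, 4, 3, 5, 1)  # (b, gh, gw, patch_size, patch_size, c)
    return x.reshape(b, gh * gw, patch_size * patch_size * c)


images = torch.arange(2 * 3 * 32 * 32, dtype=torch.float32).reshape(2, 3, 32, 32)
target = patchify(images, patch_size=16)
print(target.shape)
```

With a target built this way, `masked_image_modeling_loss(pred, target, mask)` receives tensors whose patch dimension lines up with the mask.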

8. Knowledge Check

Quiz 1: The Core ViT Paradigm

Question: How does the concept “an image is worth 16×16 words” represent the fundamental paradigm shift introduced by the Vision Transformer (ViT) for processing images?

Answer: This concept captures ViT’s revolutionary approach. Instead of treating images as pixel grids, ViT divides them into fixed-size patches. These patches function like words in NLP transformers. Consequently, ViT transforms image classification into a sequence processing task. This allows self-attention mechanisms to work directly on visual data.

Quiz 2: ViT Architecture Components

Question: What are the three primary components found within each layer of a Vision Transformer’s Transformer Encoder?

Answer: Each Transformer Encoder layer contains three key components. First, multi-head self-attention lets every patch token attend to all others, capturing global relationships. Second, a feed-forward network (MLP) processes each token representation independently. Finally, residual connections and layer normalization wrap both sub-blocks, which stabilizes training and keeps gradients flowing through deep stacks.

Quiz 3: Image-to-Sequence Transformation

Question: Describe the process a Vision Transformer uses to convert a standard 2D image into a 1D sequence of tokens that the Transformer Encoder can process.

Answer: ViT converts images through three steps. First, it divides the image into non-overlapping patches (typically 16×16 pixels). Next, it flattens each 2D patch into a one-dimensional vector. Finally, it applies a trainable linear projection to each vector, producing D-dimensional patch embeddings. A class token is then prepended and positional embeddings are added to form the sequence the encoder receives.

Quiz 4: The Role of Positional Embeddings

Question: The self-attention mechanism has no built-in notion of token order (strictly speaking, it is permutation-equivariant), meaning it treats input tokens as an unordered set. Why are positional embeddings essential for a Vision Transformer to process images effectively?

Answer: Positional embeddings inject spatial information into patch embeddings. Without them, shuffling the patches would simply shuffle the outputs in the same way, so the model could not tell where in the image a patch came from. These learnable embeddings enable ViT to recognize spatial relationships between patches. Therefore, they are essential for interpreting image structure correctly.

Quiz 5: The Self-Attention Mechanism

Question: In the self-attention mechanism, what are the three matrices computed from the input sequence, and how are they used in the scaled dot-product attention formula?

Answer: Self-attention computes three matrices from the input sequence through learned linear projections: Query (Q), Key (K), and Value (V). The formula \( \text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^T}{\sqrt{d_k}}\right)V \) defines their interaction. First, Q and K compute attention scores through dot products. Then, scaling by \( \sqrt{d_k} \) and applying softmax turns each row of scores into attention weights. Finally, these weights produce a weighted sum of the V vectors. Each resulting vector is a mix of information from all patches, weighted by their relevance to the querying patch.
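The formula in this answer can be sketched in a few lines of plain Python. This is an illustrative toy under stated assumptions, not the article's implementation: the input vectors are used directly as Q, K, and V in place of learned projections, and the function names are hypothetical. The sketch also checks the property raised in Quiz 4, namely that reordering the input tokens only reorders the outputs.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(Q, K, V):
    """softmax(Q K^T / sqrt(d_k)) V for lists of row vectors."""
    d_k = len(K[0])
    out = []
    for q in Q:
        # Dot product of this query with every key, scaled by sqrt(d_k)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # Weighted sum of value vectors
        out.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return out


# Three toy "patch" vectors; Q = K = V = X stands in for learned projections.
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out = scaled_dot_product_attention(X, X, X)

# Reorder the tokens and run attention again.
perm = [2, 0, 1]
X_perm = [X[i] for i in perm]
out_perm = scaled_dot_product_attention(X_perm, X_perm, X_perm)

# Each output row of the permuted run matches the corresponding original row.
for j, i in enumerate(perm):
    print(j, i, out_perm[j], out[i])
```

Because the outputs follow the tokens wherever they move, attention alone carries no information about where a patch sits in the image, which is the gap positional embeddings fill.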

Quiz 6: Training and Data Requirements

Question: Why are Vision Transformers often described as “data hungry,” and why might they underperform compared to Convolutional Neural Networks (CNNs) when trained on smaller datasets?

Answer: ViTs lack CNNs’ built-in inductive biases, such as locality and translation equivariance. Consequently, they must learn these visual properties from data rather than having them built into the architecture. This requires massive pre-training datasets. On smaller datasets, ViTs cannot learn these properties effectively. Therefore, they often underperform compared to CNNs in low-data scenarios.

Quiz 7: Architectural Improvements in Variants

Question: The original ViT model has a quadratic computational complexity with respect to the number of image patches. How do hierarchical variants like the Swin Transformer address this limitation?

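Answer: Swin Transformer replaces global self-attention with local attention computed within non-overlapping windows, which restricts each attention calculation to a small, fixed number of patches. To let information flow across window boundaries, it shifts the window partition between consecutive layers. It also merges patches between stages to build a hierarchical feature map. As a result, for a fixed window size, attention cost drops from O(n²) to O(n) in the number of patches n, which makes high-resolution inputs far more tractable.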
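A quick count makes the difference concrete. The sketch below only counts query-key pairs scored by attention and ignores projections and MLP cost. The function names are hypothetical, and the 7×7-patch window is an illustrative assumption rather than a value taken from this article.

```python
def global_attention_pairs(num_patches: int) -> int:
    """Pairs scored when every patch attends to every patch."""
    return num_patches * num_patches


def windowed_attention_pairs(num_patches: int, window_patches: int) -> int:
    """Pairs scored when attention stays inside non-overlapping windows.

    Assumes num_patches is divisible by window_patches.
    """
    num_windows = num_patches // window_patches
    return num_windows * window_patches * window_patches


window = 7 * 7  # patches per window (illustrative assumption)
for side in (14, 28, 56):
    n = side * side  # patches in a side x side grid
    print(side, n, global_attention_pairs(n), windowed_attention_pairs(n, window))
```

Quadrupling the number of patches multiplies the global count by 16 but the windowed count only by 4, which is the O(n²) versus O(n) behavior described in the answer.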

Quiz 8: Advanced Applications

Question: How does the Detection Transformer (DETR) model adapt the transformer architecture for the task of object detection, and what traditional component does it eliminate?

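Answer: DETR treats object detection as a direct set prediction problem. A CNN backbone extracts image features, which a transformer encoder then refines with global self-attention. A transformer decoder takes a fixed set of learned object queries, attends to the encoded features, and outputs one box and class prediction per query, with bipartite matching pairing predictions with ground-truth objects during training. This end-to-end approach eliminates hand-crafted components. Most notably, it removes the need for anchor boxes and non-maximum suppression used in traditional detectors.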

Quiz 9: Key Advantages of ViT

Question: What is a key advantage of the Vision Transformer’s self-attention mechanism compared to the local receptive fields used in traditional Convolutional Neural Networks (CNNs)?

Answer: Self-attention provides a global receptive field from the first layer. This allows immediate capture of long-range dependencies. The model can recognize relationships between distant patches across entire images. In contrast, CNNs must gradually build larger receptive fields through successive layers. Therefore, ViT can model global context more directly, although at a higher computational cost per layer.

Quiz 10: Core Limitations of ViT

Question: What is the primary computational limitation of the standard Vision Transformer architecture when applied to high-resolution images?

Answer: Self-attention has quadratic complexity relative to sequence length. As image resolution increases at a fixed patch size, the number of patches grows in proportion to the image area. Computational and memory costs of attention then grow quadratically in that patch count. Consequently, processing high-resolution images becomes very expensive. This represents ViT’s primary computational bottleneck.
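As a worked example of this scaling, assume a square image of side \( H \) and patch size \( P \), following the \( 224 / 16 \) example used earlier in the article. The symbols \( H \), \( P \), and \( N \) are introduced here only for illustration.

\[
N = \left(\frac{H}{P}\right)^2, \qquad \text{attention cost} \propto N^2 = \left(\frac{H}{P}\right)^4
\]

With \( P = 16 \), an input of \( 224 \times 224 \) gives \( N = 196 \) and \( N^2 = 38{,}416 \) attention scores per head, while \( 448 \times 448 \) gives \( N = 784 \) and \( N^2 = 614{,}656 \). Doubling the side length therefore quadruples the number of patches and multiplies the attention cost by 16.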