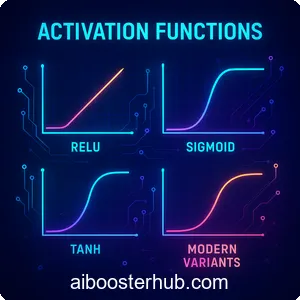

Activation Functions: ReLU, Sigmoid, Tanh, and Modern Variants

Activation functions are the mathematical gates that determine whether a neuron in a neural network should fire or remain dormant. Without these nonlinear functions, even the deepest neural networks would collapse into simple linear models, unable to capture the complex patterns that make modern AI so powerful. Understanding activation functions is fundamental to building, training, and optimizing neural networks for real-world applications.

In this comprehensive guide, we’ll explore the most important activation functions in deep learning, from classical sigmoid and tanh to the revolutionary ReLU and its modern variants like Leaky ReLU, GELU, and Swish. Whether you’re building your first neural network or optimizing a production model, choosing the right activation function can dramatically impact your model’s performance.

1. What are activation functions and why do they matter?

An activation function is a mathematical operation applied to the output of each neuron in a neural network. It takes the weighted sum of inputs and transforms it into an output signal that gets passed to the next layer. This transformation introduces the critical element of nonlinearity into neural networks.

The role of nonlinearity in neural networks

Without activation functions, a neural network would simply be a series of linear transformations. No matter how many layers you stack, the combination of linear operations remains linear. This means your network could only learn linear relationships, like fitting a straight line to data.
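The collapse of stacked linear layers can be checked numerically. The following is a minimal sketch, assuming two small fully connected layers with arbitrary shapes and random weights (all names and sizes are illustrative, not taken from a specific model):

```python
import numpy as np

rng = np.random.default_rng(0)

# Two stacked linear layers with no activation in between (shapes are arbitrary)
X = rng.normal(size=(5, 3))                      # 5 samples, 3 features
W1, b1 = rng.normal(size=(3, 4)), rng.normal(size=4)
W2, b2 = rng.normal(size=(4, 2)), rng.normal(size=2)

two_layer_output = (X @ W1 + b1) @ W2 + b2

# The same mapping written as a single linear layer
W_combined = W1 @ W2
b_combined = b1 @ W2 + b2
one_layer_output = X @ W_combined + b_combined

print(np.allclose(two_layer_output, one_layer_output))
```

The two outputs agree up to floating-point error, so the extra layer adds parameters but no expressive power.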

Nonlinearity is what lets neural networks approximate any continuous function on a bounded domain to arbitrary accuracy, given enough hidden units, a property known as universal approximation. This capability enables networks to learn complex patterns like recognizing faces, understanding language, or playing strategic games. Consider a simple example: classifying whether a point lies inside a circle. This task requires a nonlinear decision boundary, which is impossible to achieve without nonlinear activation functions.

How activation functions work in the forward pass

During forward propagation, each neuron computes a weighted sum of its inputs plus a bias term:

$$z = w_1 x_1 + w_2 x_2 + \dots + w_n x_n + b$$

The activation function then transforms this value:

$$a = f(z)$$

Where \( f \) is the activation function and \( a \) is the activated output. This activated output becomes the input for neurons in the next layer, creating a cascade of nonlinear transformations throughout the network.
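As a worked instance of the two equations above, here is a small sketch for a single neuron. The input, weight, and bias values are made up for illustration, and sigmoid is chosen as \( f \) only as an example:

```python
import numpy as np

# Hypothetical inputs, weights, and bias for a single neuron
x = np.array([0.5, -1.0, 2.0])
w = np.array([0.4, 0.3, -0.2])
b = 0.1

z = np.dot(w, x) + b            # weighted sum plus bias: 0.2 - 0.3 - 0.4 + 0.1
a = 1.0 / (1.0 + np.exp(-z))    # sigmoid used as the activation f

print(f"z = {z:.2f}, a = {a:.4f}")
```

Here the weighted sum is -0.4, and the sigmoid maps it to an activation of roughly 0.40.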

Impact on gradient flow and training dynamics

Activation functions don’t just affect forward propagation—they critically influence how gradients flow backward during training. When we use backpropagation to update weights, gradients must flow through activation functions. If an activation function has very small derivatives (gradients), the gradient can vanish as it propagates through deep networks, making learning extremely slow or even impossible.
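To get a feel for how quickly small derivatives compound, the sketch below multiplies the largest possible sigmoid derivative (0.25) across several layers. It is a simplification that assumes every layer uses sigmoid and ignores the contribution of the weights:

```python
# Upper bound on the gradient factor contributed by the activations alone,
# assuming sigmoid in every layer (derivative at most 0.25) and ignoring weights
max_sigmoid_grad = 0.25

for depth in (1, 5, 10, 20):
    factor = max_sigmoid_grad ** depth
    print(f"{depth:2d} layers: activation gradient factor <= {factor:.3e}")

factor_at_10 = max_sigmoid_grad ** 10
```

Even in this best case, ten layers shrink the gradient by about six orders of magnitude.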

Consider this Python example showing how different activation functions transform the same input:

```python
import numpy as np
import matplotlib.pyplot as plt

def sigmoid(x):
    return 1 / (1 + np.exp(-x))

def tanh(x):
    return np.tanh(x)

def relu(x):
    return np.maximum(0, x)

# Generate input values
x = np.linspace(-5, 5, 100)

# Apply activation functions
y_sigmoid = sigmoid(x)
y_tanh = tanh(x)
y_relu = relu(x)

# Visualize
plt.figure(figsize=(12, 4))
plt.plot(x, y_sigmoid, label='Sigmoid', linewidth=2)
plt.plot(x, y_tanh, label='Tanh', linewidth=2)
plt.plot(x, y_relu, label='ReLU', linewidth=2)
plt.grid(True, alpha=0.3)
plt.legend()
plt.xlabel('Input (z)')
plt.ylabel('Output (a)')
plt.title('Comparing Activation Functions')
plt.show()
```

2. Classical activation functions: Sigmoid and tanh

Before ReLU revolutionized deep learning, sigmoid and tanh were the dominant activation functions. Understanding these classical functions provides important context for appreciating modern alternatives.

Sigmoid activation function

The sigmoid function, also called the logistic function, maps any real-valued number to a value between 0 and 1:

$$\sigma(z) = \frac{1}{1 + e^{-z}}$$

Its derivative, crucial for backpropagation, is elegantly related to the function itself:

$$\sigma'(z) = \sigma(z)\big(1 - \sigma(z)\big)$$
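The derivative identity can be sanity-checked against a finite-difference estimate. This is a small sketch; the helper name `sigmoid`, the step size `eps`, and the test points are arbitrary choices:

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

z = np.linspace(-4.0, 4.0, 9)   # includes z = 0
eps = 1e-5

# Central finite-difference estimate of the derivative
numerical = (sigmoid(z + eps) - sigmoid(z - eps)) / (2 * eps)
# Closed form from the identity above
analytical = sigmoid(z) * (1.0 - sigmoid(z))

print(np.max(np.abs(numerical - analytical)))  # close to zero
print(analytical.max())                        # the peak value, reached at z = 0
```

The peak of the derivative is 0.25, at \( z = 0 \), which is why sigmoid gradients shrink so quickly in deep stacks.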

Sigmoid functions were historically popular because their output can be interpreted as probabilities. For example, in binary classification, the final layer often uses sigmoid to produce a probability between 0 and 1.

Here’s a practical implementation:

```python
import numpy as np

class SigmoidActivation:
    def forward(self, z):
        """Forward pass through sigmoid"""
        self.output = 1 / (1 + np.exp(-np.clip(z, -500, 500)))
        return self.output

    def backward(self, dL_da):
        """Backward pass - compute gradient"""
        dL_dz = dL_da * self.output * (1 - self.output)
        return dL_dz

# Example usage
sigmoid = SigmoidActivation()
z = np.array([-2, -1, 0, 1, 2])
a = sigmoid.forward(z)
print(f"Input: {z}")
print(f"Output: {a}")
# Output: [0.119 0.269 0.5 0.731 0.881]
```

Limitations of sigmoid:

- Vanishing gradients: For very large or small inputs, the gradient approaches zero, causing slow learning in deep networks

- Not zero-centered: Outputs are always positive, which can cause zigzagging during gradient descent

- Computationally expensive: The exponential operation is slower than simpler alternatives

Tanh activation function

The hyperbolic tangent (tanh) function maps inputs to values between -1 and 1:

$$\tanh(z) = \frac{e^{z} - e^{-z}}{e^{z} + e^{-z}} = \frac{2}{1 + e^{-2z}} - 1$$

Its derivative is:

$$ \tanh'(z) = 1 - \tanh^2(z) $$

Tanh is essentially a scaled and shifted version of sigmoid. Its zero-centered output makes it superior to sigmoid for hidden layers, as it doesn’t bias the gradients in one direction.
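The "scaled and shifted" relationship is \( \tanh(z) = 2\sigma(2z) - 1 \), which follows from the second form of the tanh formula above. A quick numerical check, as a sketch with an arbitrary grid of test points:

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

z = np.linspace(-3.0, 3.0, 13)

# tanh via sigmoid: stretch the input by 2, scale the output by 2, shift by -1
tanh_from_sigmoid = 2.0 * sigmoid(2.0 * z) - 1.0

print(np.allclose(tanh_from_sigmoid, np.tanh(z)))
```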

```python
class TanhActivation:
    def forward(self, z):
        """Forward pass through tanh"""
        self.output = np.tanh(z)
        return self.output

    def backward(self, dL_da):
        """Backward pass - compute gradient"""
        dL_dz = dL_da * (1 - self.output ** 2)
        return dL_dz

# Example usage
tanh = TanhActivation()
z = np.array([-2, -1, 0, 1, 2])
a = tanh.forward(z)
print(f"Input: {z}")
print(f"Output: {a}")
# Output: [-0.964 -0.762 0. 0.762 0.964]
```

Advantages over sigmoid:

- Zero-centered outputs

- Stronger gradients (derivative ranges from 0 to 1, compared to sigmoid’s 0 to 0.25)

Persistent limitations:

- Still suffers from vanishing gradients

- Computationally expensive

3. The ReLU revolution in deep learning

The Rectified Linear Unit (ReLU) transformed deep learning by introducing a remarkably simple yet powerful activation function:

$$ \text{ReLU}(z) = \max(0, z) =
\begin{cases}
z, & \text{if } z > 0 \\[6pt]
0, & \text{if } z \leq 0
\end{cases} $$

Its derivative is equally straightforward:

$$ \text{ReLU}'(z) =
\begin{cases}
1, & \text{if } z > 0 \\[6pt]
0, & \text{if } z \leq 0
\end{cases} $$
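A simple gradient check compares this derivative with a finite-difference estimate. The sketch below uses hypothetical helper names (`relu`, `relu_grad`) and deliberately avoids \( z = 0 \), where ReLU is not differentiable and the value 0 is a convention:

```python
import numpy as np

def relu(z):
    return np.maximum(0.0, z)

def relu_grad(z):
    # 1 where the input is positive, 0 elsewhere
    return (z > 0).astype(float)

# Test points chosen away from z = 0
z = np.array([-2.0, -0.5, 0.5, 2.0])
eps = 1e-6

numerical = (relu(z + eps) - relu(z - eps)) / (2 * eps)
analytical = relu_grad(z)

print(numerical)
print(analytical)
```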

Why ReLU became the default choice

ReLU’s dominance stems from several key advantages:

Computational efficiency: ReLU involves simple thresholding and no exponential operations, making it considerably cheaper to compute than sigmoid or tanh. The exact speedup depends on hardware and implementation.

Sparse activation: When pre-activations are roughly symmetric around zero, about 50% of neurons output zero in a given forward pass, creating sparse representations that are often described as biologically plausible and that are cheap to compute.

Reduced vanishing gradients: For positive inputs, the gradient is constant at 1, allowing signals to flow through many layers without diminishing.

Better empirical performance: Deep networks with ReLU consistently train faster and achieve better results than those using sigmoid or tanh.

Here’s a complete implementation with forward and backward passes, applied to a small layer:

```python
import numpy as np

class ReLUActivation:
    def forward(self, z):
        """Forward pass through ReLU"""
        self.z = z
        self.output = np.maximum(0, z)
        return self.output

    def backward(self, dL_da):
        """Backward pass - compute gradient"""
        dL_dz = dL_da * (self.z > 0).astype(float)
        return dL_dz

# Demonstration with a simple network layer
np.random.seed(42)
X = np.random.randn(3, 4)  # 3 samples, 4 features
W = np.random.randn(4, 5)  # weights
b = np.random.randn(5)     # bias

# Forward pass
z = X @ W + b
relu = ReLUActivation()
a = relu.forward(z)

print("Pre-activation values (z):")
print(z)
print("\nPost-activation values (ReLU(z)):")
print(a)
print(f"\nSparsity: {np.mean(a == 0) * 100:.1f}% of activations are zero")
```

The dying ReLU problem

Despite its strengths, ReLU has a critical flaw: the “dying ReLU” problem. When a neuron’s weights are updated such that its weighted input is always negative, the neuron outputs zero for all inputs. With a zero gradient, the neuron never recovers—it’s effectively dead.

This can happen when:

- Learning rates are too high, causing large weight updates

- There’s an unfortunate initialization

- There are significant data shifts

In practice, you might find that 10-40% of ReLU neurons in a trained network are dead. While this isn’t always problematic (it can be a form of feature selection), excessive dead neurons reduce model capacity.
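To make the failure mode concrete, the sketch below builds one hypothetical neuron whose bias has been pushed far into the negative range, for example by an overly large update. The weights, bias, and input distribution are invented for illustration:

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 4))        # 1000 samples, 4 features

# Hypothetical neuron after a bad update: small weights, large negative bias
w = np.array([0.1, -0.2, 0.05, 0.1])
b = -10.0

z = X @ w + b
a = np.maximum(0.0, z)                # ReLU output
grad_mask = (z > 0).astype(float)     # ReLU'(z) for every sample

print(f"max pre-activation: {z.max():.2f}")
print(f"fraction of samples with nonzero output: {np.mean(a > 0):.2f}")
print(f"fraction of samples with nonzero gradient: {grad_mask.mean():.2f}")
```

Because the pre-activation is negative for every sample, both the output and the gradient are zero everywhere, so gradient descent has no signal with which to move the weights back.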

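```python
import numpy as np

def analyze_dead_neurons(activations):
    """Analyze what percentage of neurons never activate"""
    # A neuron (column) counts as dead if it outputs zero for every sample (row)
    dead_neurons = np.all(activations == 0, axis=0)
    dead_percentage = np.mean(dead_neurons) * 100
    print(f"Dead neurons: {dead_percentage:.1f}%")
    return dead_percentage

# Simulate activations across 1000 samples, 100 neurons
# (reuses the ReLUActivation class defined above)
activations = np.random.randn(1000, 100)
relu = ReLUActivation()
relu_activations = relu.forward(activations)

# With random inputs like these, every neuron fires for some sample,
# so this reports 0.0%; run it on real layer outputs to find dead units
analyze_dead_neurons(relu_activations)
```

4. Modern ReLU variants: Leaky ReLU, PReLU, and ELU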

To address ReLU’s limitations, researchers developed several variants that maintain its benefits while fixing specific issues.

Leaky ReLU

Leaky ReLU introduces a small slope for negative values, preventing neurons from dying completely:

$$ \text{LeakyReLU}(z) =
\begin{cases}
z, & \text{if } z > 0 \\[6pt]
\alpha z, & \text{if } z \leq 0
\end{cases} $$

Where \( \alpha \) is typically 0.01 or 0.1. This small negative slope ensures gradients always flow, even for negative inputs.

```python
class LeakyReLUActivation:
    def __init__(self, alpha=0.01):
        self.alpha = alpha

    def forward(self, z):
        """Forward pass through Leaky ReLU"""
        self.z = z
        self.output = np.where(z > 0, z, self.alpha * z)
        return self.output

    def backward(self, dL_da):
        """Backward pass - compute gradient"""
        dL_dz = dL_da * np.where(self.z > 0, 1, self.alpha)
        return dL_dz

# Compare ReLU and Leaky ReLU
z = np.array([-2, -1, 0, 1, 2])
relu = ReLUActivation()
leaky_relu = LeakyReLUActivation(alpha=0.1)

print(f"Input: {z}")
print(f"ReLU: {relu.forward(z)}")
print(f"Leaky ReLU: {leaky_relu.forward(z)}")
# Leaky ReLU outputs: [-0.2 -0.1 0. 1. 2. ]
```

Parametric ReLU (PReLU)

PReLU takes Leaky ReLU one step further by making \( \alpha \) a learnable parameter:

$$ \text{PReLU}(z) =
\begin{cases}
z, & \text{if } z > 0 \\[6pt]
\alpha_i z, & \text{if } z \leq 0
\end{cases} $$

Where \( \alpha_i \) is learned during training, potentially with a different value for each channel. This allows the network to adaptively determine the best negative slope.

```python
class PReLUActivation:
    def __init__(self, num_parameters=1):
        # Initialize learnable alpha parameters (one shared value, or one per channel)
        self.alpha = np.ones(num_parameters) * 0.25

    def forward(self, z):
        """Forward pass through PReLU"""
        self.z = z
        self.output = np.where(z > 0, z, self.alpha * z)
        return self.output

    def backward(self, dL_da):
        """Backward pass - compute gradient w.r.t. input and alpha"""
        dL_dz = dL_da * np.where(self.z > 0, 1, self.alpha)
        # Gradient w.r.t. alpha (for parameter update): only negative inputs contribute
        dL_dalpha = np.sum(dL_da * np.where(self.z > 0, 0, self.z), axis=0)
        if self.alpha.size == 1:
            # A single shared alpha accumulates the gradient from every channel
            dL_dalpha = np.array([np.sum(dL_dalpha)])
        return dL_dz, dL_dalpha

    def update_parameters(self, dL_dalpha, learning_rate=0.01):
        """Update the learnable alpha parameter"""
        self.alpha -= learning_rate * dL_dalpha
```

Exponential Linear Unit (ELU)

ELU uses an exponential function for negative values, creating smooth gradients:

$$ \text{ELU}(z) =
\begin{cases}
z, & \text{if } z > 0 \\[6pt]
\alpha (e^{z} - 1), & \text{if } z \leq 0
\end{cases} $$

With derivative:

$$ \text{ELU}'(z) =
\begin{cases}
1, & \text{if } z > 0 \\[6pt]
\alpha e^{z} = \text{ELU}(z) + \alpha, & \text{if } z \leq 0
\end{cases} $$
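The identity \( \alpha e^{z} = \text{ELU}(z) + \alpha \) for negative inputs is what lets a backward pass reuse the stored forward output instead of recomputing the exponential. A brief numerical check, as a sketch with \( \alpha = 1 \) and an arbitrary grid of negative inputs:

```python
import numpy as np

alpha = 1.0
z = np.linspace(-4.0, -0.1, 20)   # negative inputs only

elu_output = alpha * (np.exp(z) - 1.0)
derivative_direct = alpha * np.exp(z)
derivative_from_output = elu_output + alpha

print(np.allclose(derivative_direct, derivative_from_output))
```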

ELU’s advantages:

- Pushes mean activations closer to zero, accelerating learning

- Smooth gradient everywhere, reducing optimization difficulties

- Can produce negative outputs, making representations more robust

```python
class ELUActivation:
    def __init__(self, alpha=1.0):
        self.alpha = alpha

    def forward(self, z):
        """Forward pass through ELU"""
        self.z = z
        self.output = np.where(z > 0, z, self.alpha * (np.exp(z) - 1))
        return self.output

    def backward(self, dL_da):
        """Backward pass - compute gradient"""
        dL_dz = dL_da * np.where(self.z > 0, 1, self.output + self.alpha)
        return dL_dz

# Comparison
z = np.linspace(-3, 3, 100)
elu = ELUActivation(alpha=1.0)
leaky_relu = LeakyReLUActivation(alpha=0.1)

plt.figure(figsize=(10, 6))
plt.plot(z, relu.forward(z), label='ReLU', linewidth=2)
plt.plot(z, leaky_relu.forward(z), label='Leaky ReLU', linewidth=2)
plt.plot(z, elu.forward(z), label='ELU', linewidth=2)
plt.grid(True, alpha=0.3)
plt.legend()
plt.xlabel('Input (z)')
plt.ylabel('Output')
plt.title('ReLU variants comparison')
plt.show()
```

5. Smooth activation functions: GELU and Swish

Recent research has shown that smooth, non-monotonic activation functions can outperform ReLU variants in certain architectures, particularly in transformer models and natural language processing.

Gaussian Error Linear Unit (GELU)

GELU weights each input by the probability that a standard normal variable falls below it, using the cumulative distribution function of the Gaussian distribution:

$$ \text{GELU}(z) = z \cdot \Phi(z) $$

Where \( \Phi(z) \) is the cumulative distribution function of the standard normal distribution. In practice, it’s often approximated as:

$$ \text{GELU}(z) \approx 0.5z \left(1 + \tanh\left[\sqrt{\frac{2}{\pi}} \left(z + 0.044715z^3\right)\right]\right) $$
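To see how close the tanh approximation is to the exact definition, the two can be compared directly. This is a sketch using only the standard library, with \( \Phi \) written in terms of the error function; the function names and the evaluation grid are arbitrary:

```python
import math

def gelu_exact(z):
    # z * Phi(z), with Phi(z) = 0.5 * (1 + erf(z / sqrt(2)))
    return 0.5 * z * (1.0 + math.erf(z / math.sqrt(2.0)))

def gelu_tanh(z):
    # tanh-based approximation from the formula above
    inner = math.sqrt(2.0 / math.pi) * (z + 0.044715 * z ** 3)
    return 0.5 * z * (1.0 + math.tanh(inner))

points = [i / 10.0 for i in range(-40, 41)]   # grid on [-4, 4]
max_gap = max(abs(gelu_exact(z) - gelu_tanh(z)) for z in points)

print(f"largest gap on [-4, 4]: {max_gap:.2e}")
```

On this grid the two curves differ only by a small amount, which is why the approximation is widely used when an error-function kernel is unavailable or slower.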

GELU is the default activation function in BERT, GPT, and many other transformer models. Its smooth, probabilistic nature allows it to weight inputs by their value rather than gating them abruptly.

```python
class GELUActivation:
    def forward(self, z):
        """Forward pass through GELU (approximation)"""
        self.z = z
        # Approximation formula
        cdf = 0.5 * (1.0 + np.tanh(
            np.sqrt(2.0 / np.pi) * (z + 0.044715 * z**3)
        ))
        self.output = z * cdf
        return self.output

    def backward(self, dL_da):
        """Backward pass - compute gradient (approximation)"""
        z = self.z
        # Derivative computation
        cdf = 0.5 * (1.0 + np.tanh(
            np.sqrt(2.0 / np.pi) * (z + 0.044715 * z**3)
        ))
        pdf = np.exp(-0.5 * z**2) / np.sqrt(2.0 * np.pi)
        dL_dz = dL_da * (cdf + z * pdf)
        return dL_dz

# Visualize GELU behavior
gelu = GELUActivation()
z = np.linspace(-3, 3, 200)
a = gelu.forward(z)

plt.figure(figsize=(10, 6))
plt.plot(z, a, label='GELU', linewidth=2, color='purple')
plt.plot(z, relu.forward(z), label='ReLU', linewidth=2, alpha=0.6)
plt.grid(True, alpha=0.3)
plt.legend()
plt.xlabel('Input (z)')
plt.ylabel('Output')
plt.title('GELU vs ReLU')
plt.show()
```

Swish activation function

Swish, discovered through an automated search over candidate activation functions, is defined as:

$$\text{Swish}(z) = z \cdot \sigma(\beta z)$$

Where \( \sigma \) is the sigmoid function and \( \beta \) is a learnable parameter (often set to 1). When \( \beta = 1 \), this is also called SiLU (Sigmoid Linear Unit).

Swish’s key properties:

- Smooth and non-monotonic

- Self-gated: the activation gates itself based on its own value

- Unbounded above, bounded below

- Approaches linear for large positive values
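A Swish layer can be sketched in the same style as the other activation classes in this guide. The class name `SwishActivation` and the choice to keep \( \beta \) fixed rather than learned are assumptions made for this illustration:

```python
import numpy as np

class SwishActivation:
    def __init__(self, beta=1.0):
        self.beta = beta  # kept fixed in this sketch; it could also be trained

    def forward(self, z):
        """Forward pass: z * sigmoid(beta * z)"""
        self.z = z
        self.sig = 1.0 / (1.0 + np.exp(-self.beta * z))
        self.output = z * self.sig
        return self.output

    def backward(self, dL_da):
        """Backward pass using d/dz [z * s] = s + beta * z * s * (1 - s)"""
        grad = self.sig + self.beta * self.z * self.sig * (1.0 - self.sig)
        return dL_da * grad

swish = SwishActivation(beta=1.0)
z = np.linspace(-5.0, 5.0, 1001)
a = swish.forward(z)

print(f"minimum output: {a.min():.3f} at z = {z[a.argmin()]:.2f}")
print(f"output at z = 5: {a[-1]:.3f}")
```

The dip below zero for moderately negative inputs is the non-monotonic region, and the output approaching the input for large positive values reflects the near-linear behavior listed above.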

6. Specialized activation functions: Softmax and output layers

While most activation functions we’ve discussed are used in hidden layers, output layers often require specialized activation functions tailored to specific tasks.

Softmax for multi-class classification

Softmax converts a vector of real numbers into a probability distribution:

$$\text{softmax}(z_i) = \frac{e^{z_i}}{\sum_{j=1}^{K} e^{z_j}}$$

Where \( K \) is the number of classes. The outputs sum to 1 and can be interpreted as class probabilities.

Key properties:

- Differentiable everywhere

- Emphasizes the largest values (winner-takes-all behavior)

- Numerically stable when computed with the max-subtraction (log-sum-exp) trick
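The stability point is easiest to see with deliberately large logits. In the sketch below (the values are chosen only to force overflow), a naive softmax produces `nan`, while shifting by the maximum gives a valid distribution. The log-softmax line shows the log-sum-exp form:

```python
import numpy as np

logits = np.array([1000.0, 1001.0, 1002.0])  # deliberately large values

# Naive softmax: exp overflows to inf, and inf / inf gives nan
with np.errstate(over="ignore", invalid="ignore"):
    naive = np.exp(logits) / np.sum(np.exp(logits))

# Stable softmax: subtracting the max does not change the result mathematically
shifted = logits - np.max(logits)
stable = np.exp(shifted) / np.sum(np.exp(shifted))

# Log-softmax via log-sum-exp, computed on the shifted logits
log_softmax = shifted - np.log(np.sum(np.exp(shifted)))

print(naive)
print(stable)
print(np.exp(log_softmax))
```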

```python
class SoftmaxActivation:
    def forward(self, z):
        """Forward pass through softmax with numerical stability"""
        # Subtract max for numerical stability
        exp_z = np.exp(z - np.max(z, axis=-1, keepdims=True))
        self.output = exp_z / np.sum(exp_z, axis=-1, keepdims=True)
        return self.output

    def backward(self, dL_da):
        """Backward pass - compute gradient"""
        # For categorical cross-entropy, this simplifies significantly
        # General case is more complex
        batch_size = dL_da.shape[0]
        dL_dz = np.empty_like(dL_da)
        for i in range(batch_size):
            # Jacobian matrix for softmax
            s = self.output[i].reshape(-1, 1)
            jacobian = np.diagflat(s) - np.dot(s, s.T)
            dL_dz[i] = np.dot(jacobian, dL_da[i])
        return dL_dz

# Example: 3-class classification
logits = np.array([
    [2.0, 1.0, 0.1],  # Sample 1
    [0.5, 2.5, 1.0],  # Sample 2
    [1.0, 1.0, 1.0]   # Sample 3
])

softmax = SoftmaxActivation()
probabilities = softmax.forward(logits)

print("Logits:")
print(logits)
print("\nProbabilities (after softmax):")
print(probabilities)
print("\nSum of probabilities per sample:")
print(np.sum(probabilities, axis=1))
```

Choosing activation functions by task

Different tasks require different output activations:

Binary classification: Sigmoid in the output layer produces a probability between 0 and 1. Use with binary cross-entropy loss.

Multi-class classification: Softmax in the output layer produces a probability distribution. Use with categorical cross-entropy loss.

Regression: Linear activation (no activation function) in the output layer allows unrestricted real-valued outputs. Use with mean squared error loss.

Multi-label classification: Sigmoid for each output independently allows multiple positive classes. Use with binary cross-entropy loss per label.
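The difference between the multi-class and multi-label cases shows up when the same scores pass through each output activation. A short sketch with made-up logits for three labels:

```python
import numpy as np

logits = np.array([2.0, 1.0, -1.0])  # hypothetical scores for three labels

# Multi-class: softmax makes the classes compete for a total probability of 1
exp_shifted = np.exp(logits - logits.max())
softmax_probs = exp_shifted / exp_shifted.sum()

# Multi-label: an independent sigmoid per label, so several can be likely at once
sigmoid_probs = 1.0 / (1.0 + np.exp(-logits))

print(softmax_probs, softmax_probs.sum())
print(sigmoid_probs, sigmoid_probs.sum())
```

The softmax outputs sum to 1, while the sigmoid outputs do not, so two labels can exceed 0.5 at the same time.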

7. Practical guidelines for choosing activation functions

Selecting the right activation function depends on your network architecture, task, and computational constraints. Here’s a practical decision framework:

Default recommendations by architecture type

Convolutional neural networks (CNNs): Use ReLU or Leaky ReLU for hidden layers. ReLU remains the standard choice for image-related tasks due to its computational efficiency and strong empirical performance. Consider Leaky ReLU if you encounter dying neurons.

Recurrent neural networks (RNNs/LSTMs): Use tanh for hidden states and sigmoid for gates. These functions’ bounded output ranges help prevent exploding gradients in recurrent architectures.

Transformers and attention-based models: Use GELU or Swish for feed-forward layers. Modern language models consistently show that smooth activation functions work better in these architectures.

Fully connected deep networks: Use ReLU, GELU, or Swish depending on depth. For very deep networks (>20 layers), consider ELU or GELU to maintain better gradient flow.

Debugging activation-related issues

Training loss doesn’t decrease

- Check for dying ReLU: Switch to Leaky ReLU or ELU

- Verify output activation matches your loss function

- Inspect activation distributions to ensure they’re not saturated

Gradients explode or vanish

- For vanishing: Replace sigmoid/tanh with ReLU variants

- For exploding: Add gradient clipping or use bounded activations

- Consider batch normalization to stabilize activations

Slow convergence

- Try GELU or Swish for smoother optimization landscapes

- Ensure proper weight initialization for your chosen activation

- Experiment with learning rate schedules

```python
# Diagnostic function to analyze activation health
def diagnose_activations(activations, layer_name):
    """Analyze activation statistics"""
    print(f"\n{layer_name} Activation Analysis:")
    print(f" Mean: {np.mean(activations):.4f}")
    print(f" Std: {np.std(activations):.4f}")
    print(f" Min: {np.min(activations):.4f}")
    print(f" Max: {np.max(activations):.4f}")
```

8. Knowledge Check

Quiz 1: Nonlinearity in neural networks

Question: Why are activation functions essential in neural networks, and what would happen if we built a deep neural network using only linear transformations?

Answer: Activation functions introduce nonlinearity into neural networks, which is critical for learning complex patterns. Without activation functions, even the deepest neural network would collapse into a simple linear model because the combination of multiple linear operations remains linear. This means the network could only learn linear relationships, like fitting straight lines, and would be unable to solve tasks requiring nonlinear decision boundaries such as image recognition or language understanding.

Quiz 2: The sigmoid activation function

Question: What is the mathematical formula for the sigmoid activation function, and what are its primary limitations in deep learning?

Answer: The sigmoid function is defined as \(\sigma(z) = \frac{1}{1 + e^{-z}}\), mapping any input to a value between 0 and 1. Its main limitations are: vanishing gradients (derivatives approach zero for large or small inputs, slowing learning in deep networks), non-zero-centered outputs (always positive values cause zigzagging during gradient descent), and computational expense (exponential operations are slower than simpler alternatives like ReLU).

Quiz 3: ReLU revolution

Question: Explain why ReLU became the default activation function in deep learning and describe the “dying ReLU” problem.

Answer: ReLU (Rectified Linear Unit), defined as max(0, z), became dominant due to computational efficiency (a simple threshold with no exponentials, so it is cheaper to compute than sigmoid), reduced vanishing gradients (constant gradient of 1 for positive inputs), and sparse activation (about 50% of neurons output zero). The dying ReLU problem occurs when a neuron’s weights update such that its input is always negative, causing it to always output zero with zero gradient, making the neuron permanently inactive and unable to recover.

Quiz 4: Tanh vs sigmoid

Question: How does the tanh activation function differ from sigmoid, and why is it often preferred for hidden layers?

Answer: Tanh maps inputs to values between -1 and 1 (formula: \(\tanh(z) = \frac{e^{z} - e^{-z}}{e^{z} + e^{-z}}\)), while sigmoid maps to values between 0 and 1. Tanh is preferred for hidden layers because its outputs are zero-centered, which doesn’t bias gradients in one direction during training. It also has stronger gradients (derivatives range from 0 to 1) compared to sigmoid’s weaker gradients (0 to 0.25), though both still suffer from vanishing gradients in deep networks.

Quiz 5: Leaky ReLU solution

Question: What problem does Leaky ReLU solve compared to standard ReLU, and what is its mathematical formulation?

Answer: Leaky ReLU solves the dying ReLU problem by introducing a small slope for negative values instead of zeroing them out. Its formula is: LeakyReLU(z) = z if z > 0, or αz if z ≤ 0, where α is typically 0.01 or 0.1. This small negative slope ensures gradients always flow even for negative inputs, preventing neurons from becoming permanently inactive while maintaining most of ReLU’s computational benefits.

Quiz 6: GELU in transformers

Question: What is GELU (Gaussian Error Linear Unit), and why has it become the standard activation function in transformer models like BERT and GPT?

Answer: GELU weights inputs by their magnitude using the cumulative distribution function of the Gaussian distribution: GELU(z) = z · Φ(z). It’s the default in transformer models because its smooth, probabilistic nature allows it to weight inputs by value rather than gating them abruptly like ReLU. This smooth activation creates better optimization landscapes and has consistently shown superior empirical performance in natural language processing tasks compared to ReLU variants.

Quiz 7: Softmax for classification

Question: Describe the softmax activation function and explain why it’s used specifically in the output layer for multi-class classification tasks.

Answer: Softmax converts a vector of real numbers into a probability distribution using the formula: \(\text{softmax}(z_i) = \frac{e^{z_i}}{\sum_{j=1}^{K} e^{z_j}}\). It’s used for multi-class classification because it ensures all outputs sum to 1 and can be interpreted as class probabilities. Each output represents the probability that the input belongs to that particular class, making it ideal for tasks where we need to select one class from multiple mutually exclusive options.

Quiz 8: ELU characteristics

Question: What distinguishes the Exponential Linear Unit (ELU) from ReLU and Leaky ReLU, and what advantages does it offer?

Answer: ELU uses an exponential function for negative values: ELU(z) = z if z > 0, or α(e^z - 1) if z ≤ 0. Unlike ReLU’s hard threshold and Leaky ReLU’s linear negative slope, ELU has smooth gradients everywhere. This pushes mean activations closer to zero (accelerating learning), reduces optimization difficulties through smoothness, and produces negative outputs that make representations more robust, though at higher computational cost than ReLU.

Quiz 9: Swish activation properties

Question: Define the Swish activation function and explain what makes it “self-gated” compared to traditional activation functions.

Answer: Swish is defined as Swish(z) = z · σ(βz), where σ is the sigmoid function and β is typically 1 (also called SiLU). It’s “self-gated” because the activation gates itself based on its own value—the sigmoid of the input modulates the input itself. This creates a smooth, non-monotonic function that’s unbounded above, bounded below, and approaches linear behavior for large positive values, often outperforming ReLU in deep networks.

Quiz 10: Choosing activation functions by task

Question: What activation functions should be used in the output layer for binary classification, multi-class classification, and regression tasks, and why?

Answer: For binary classification, use sigmoid in the output layer because it produces a probability between 0 and 1, paired with binary cross-entropy loss. For multi-class classification, use softmax to produce a probability distribution over all classes, paired with categorical cross-entropy. For regression, use linear activation (no activation function) to allow unrestricted real-valued outputs, paired with mean squared error loss. The choice depends on the nature and range of the target output.