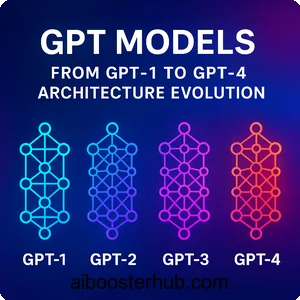

GPT Models: From GPT-1 to GPT-4 Architecture Evolution

The landscape of artificial intelligence has been fundamentally transformed by the emergence of GPT models. These generative pre-trained transformers have revolutionized how machines understand and generate human language. They now power everything from chatbots to content creation tools. Understanding the evolution of GPT architecture provides crucial insights into the rapid advancement of language models and their capabilities.

1. What is GPT and the foundation of generative pre-trained transformers

Understanding the GPT model concept

GPT stands for Generative Pre-trained Transformer. It refers to a family of language models developed by OpenAI that build on the transformer architecture for natural language processing tasks. At its core, a GPT model is an autoregressive language model: it predicts the next token in a sequence from the preceding context. Bidirectional encoders such as BERT see context on both sides of a word. GPT instead processes text strictly left to right, which makes it a natural fit for text generation.
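To make the autoregressive idea concrete, here is a minimal sketch of greedy left-to-right generation. The `next_token_probs` function is a hypothetical stand-in for the model: it reads from a hand-written bigram table, not a transformer. A real GPT conditions on the whole preceding context rather than only the last token.

```python
# Hypothetical toy "model": next-token probabilities given only the last token.
# A real GPT conditions on the entire preceding context, not just one token.
BIGRAM_PROBS = {
    "<s>": {"the": 0.6, "a": 0.4},
    "the": {"cat": 0.5, "dog": 0.3, "</s>": 0.2},
    "a": {"dog": 0.7, "</s>": 0.3},
    "cat": {"sat": 0.8, "</s>": 0.2},
    "dog": {"ran": 0.6, "</s>": 0.4},
    "sat": {"</s>": 1.0},
    "ran": {"</s>": 1.0},
}


def next_token_probs(context):
    """Return a distribution over the next token given the tokens so far."""
    return BIGRAM_PROBS[context[-1]]


def generate_greedy(max_new_tokens=10):
    """Generate left to right; each step sees only earlier tokens."""
    tokens = ["<s>"]
    for _ in range(max_new_tokens):
        probs = next_token_probs(tokens)
        token = max(probs, key=probs.get)  # greedy: pick the most likely token
        if token == "</s>":
            break
        tokens.append(token)
    return tokens[1:]  # drop the start marker


print(generate_greedy())  # ['the', 'cat', 'sat']
```

Greedy decoding is used here only because it is deterministic. Sampling from `probs` instead would give varied continuations.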

The “generative” aspect refers to the model’s ability to produce coherent text. “Pre-trained” indicates that these models undergo extensive training on massive text corpora before being fine-tuned for specific tasks. The transformer component provides the architectural foundation. It allows all positions in a sequence to be processed in parallel during training and captures long-range dependencies in text.

The transformer architecture foundation

The transformer architecture forms the backbone of all GPT models, which use only its decoder stack. It was introduced in the 2017 paper “Attention Is All You Need” (Vaswani et al.). Recurrent neural networks process tokens one at a time. Transformers instead use self-attention, which weighs how relevant each token is to every other token regardless of their distance in the sequence.

The key innovation is the scaled dot-product attention mechanism, mathematically expressed as:

$$ \text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^T}{\sqrt{d_k}}\right)V $$

Here, \(Q\), \(K\), and \(V\) represent the query, key, and value matrices, respectively, and \(d_k\) is the dimension of the key vectors. This mechanism allows the model to focus on relevant parts of the input when generating each output token.
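Why divide by \(\sqrt{d_k}\)? Assume, for this sketch, that query and key entries are independent with zero mean and unit variance. Then the dot product \(q \cdot k\) has variance \(d_k\). Unscaled scores therefore grow with the key dimension and push the softmax toward near one-hot outputs with tiny gradients. The NumPy check below estimates the variance empirically with and without the scaling.

```python
import numpy as np

rng = np.random.default_rng(0)


def score_variance(d_k, n_samples=20_000, scaled=False):
    """Empirical variance of q . k for random unit-variance vectors."""
    q = rng.standard_normal((n_samples, d_k))
    k = rng.standard_normal((n_samples, d_k))
    scores = np.sum(q * k, axis=1)  # one dot product per row
    if scaled:
        scores = scores / np.sqrt(d_k)
    return scores.var()


for d_k in (16, 64, 256):
    print(d_k, round(score_variance(d_k), 1), round(score_variance(d_k, scaled=True), 2))
# Unscaled variance tracks d_k; scaled variance stays close to 1.
```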

Pre-training and fine-tuning paradigm

GPT models follow a two-stage training process. During pre-training, the model learns general language patterns by predicting the next token across very large and diverse text corpora. This unsupervised (more precisely, self-supervised) phase lets the model pick up grammar, factual and world knowledge, and some reasoning ability.

The pre-training objective is to maximize the likelihood of the training data:

$$ L_1(\mathcal{U}) = \sum_i \log P(u_i | u_{i-k}, \ldots, u_{i-1}; \Theta) $$

In this formula, \(\mathcal{U} = \{u_1, \ldots, u_n\}\) is an unsupervised corpus of tokens, \(k\) is the context window size, and \(\Theta\) denotes the model parameters. After pre-training, the model can be fine-tuned on specific downstream tasks with much smaller labeled datasets, demonstrating strong transfer learning.
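As a worked illustration of \(L_1\), the sketch below sums \(\log P(u_i \mid u_{i-k}, \ldots, u_{i-1})\) over a short token sequence. The conditional model `toy_cond_prob` is hypothetical and deliberately simple so the result can be checked by hand. Pre-training maximizes this sum, which is equivalent to minimizing the average negative log-likelihood (cross-entropy) per token.

```python
import math


def log_likelihood(tokens, cond_prob, k):
    """Sum of log P(u_i | u_{i-k}, ..., u_{i-1}) over positions i >= 1.

    cond_prob(context, token) is a model returning a probability; only the
    previous k tokens are passed in, mirroring the context window size k.
    """
    total = 0.0
    for i in range(1, len(tokens)):
        context = tokens[max(0, i - k):i]  # at most k preceding tokens
        total += math.log(cond_prob(context, tokens[i]))
    return total


def toy_cond_prob(context, token):
    # Hypothetical model over a 4-token vocabulary: repeat the last token with
    # probability 0.7, otherwise spread 0.3 evenly over the other 3 (0.1 each).
    return 0.7 if token == context[-1] else 0.1


seq = ["a", "a", "b", "b"]
ll = log_likelihood(seq, toy_cond_prob, k=2)
print(round(ll, 4))                    # log(0.7) + log(0.1) + log(0.7)
print(round(-ll / (len(seq) - 1), 4))  # average negative log-likelihood per token
```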

2. GPT-1: The pioneering architecture

Architecture and training approach

The original GPT model was introduced by OpenAI in 2018 in the paper “Improving Language Understanding by Generative Pre-Training.” It was a milestone in showing that a pre-trained transformer decoder could be adapted to many language understanding tasks. GPT-1 used a 12-layer transformer decoder with 117 million parameters, 768-dimensional hidden states, and 12 attention heads.

Researchers trained the model on the BooksCorpus dataset. This dataset contained approximately 7,000 unique unpublished books across various genres. The total text amounted to around 800 million words. This diverse training data helped the model learn rich linguistic patterns and contextual representations.

Here’s a simplified implementation of the core attention mechanism used in GPT-1:

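```python
import numpy as np


def scaled_dot_product_attention(Q, K, V, mask=None):
    """
    Compute scaled dot-product attention.

    Args:
        Q: Query matrix of shape (batch_size, seq_len, d_k)
        K: Key matrix of shape (batch_size, seq_len, d_k)
        V: Value matrix of shape (batch_size, seq_len, d_v)
        mask: Optional array broadcastable to (batch_size, seq_len, seq_len),
            with 1 at positions that must NOT be attended to (e.g. future
            tokens for causal attention) and 0 elsewhere

    Returns:
        Output of shape (batch_size, seq_len, d_v) and the attention weights
    """
    d_k = Q.shape[-1]

    # Compute attention scores; swapaxes transposes the last two axes of K
    scores = np.matmul(Q, np.swapaxes(K, -2, -1)) / np.sqrt(d_k)

    # Apply mask if provided (large negative scores become ~0 after softmax)
    if mask is not None:
        scores = scores + (mask * -1e9)

    # Numerically stable softmax: subtract the row-wise max before exponentiating
    scores = scores - np.max(scores, axis=-1, keepdims=True)
    exp_scores = np.exp(scores)
    attention_weights = exp_scores / np.sum(exp_scores, axis=-1, keepdims=True)

    # Compute weighted sum of values
    output = np.matmul(attention_weights, V)

    return output, attention_weights


# Example: causal self-attention over a sequence of 4 tokens
rng = np.random.default_rng(0)
x = rng.standard_normal((1, 4, 8))           # (batch, seq_len, d_model)
causal_mask = np.triu(np.ones((4, 4)), k=1)  # 1 above the diagonal = future tokens
output, weights = scaled_dot_product_attention(x, x, x, mask=causal_mask)
print(output.shape)         # (1, 4, 8)
print(weights[0].round(2))  # upper triangle is 0: no attention to future tokens
```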

Key innovations and limitations

GPT-1 combined several design choices that would become standard in subsequent language models. Learned position embeddings gave the model information about word order. Byte-pair encoding (BPE) tokenization, with a vocabulary built from 40,000 merges, handled rare words by splitting them into subword units.
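The core of BPE training is a simple loop. Count adjacent symbol pairs across the corpus, then merge the most frequent pair into a new symbol. The sketch below is a minimal character-level version in the spirit of the original BPE algorithm for subword units, using a made-up word-frequency table. GPT-1’s actual tokenizer and GPT-2’s byte-level variant add details omitted here. Ties are broken by first occurrence, an arbitrary choice made for this sketch.

```python
from collections import Counter


def get_pair_counts(vocab):
    """Count adjacent symbol pairs, weighted by word frequency."""
    pairs = Counter()
    for word, freq in vocab.items():
        symbols = word.split()
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs


def merge_pair(pair, vocab):
    """Replace every adjacent occurrence of `pair` with the merged symbol."""
    merged = {}
    for word, freq in vocab.items():
        symbols = word.split()
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        merged[" ".join(out)] = freq
    return merged


def learn_bpe(vocab, num_merges):
    """Return the learned merges (in order) and the final segmented vocabulary."""
    merges = []
    for _ in range(num_merges):
        pairs = get_pair_counts(vocab)
        if not pairs:
            break
        best = max(pairs, key=pairs.get)  # most frequent pair
        vocab = merge_pair(best, vocab)
        merges.append(best)
    return merges, vocab


# Hypothetical corpus: words split into characters, "</w>" marks the word end
corpus = {"l o w </w>": 5, "l o w e r </w>": 2, "n e w e s t </w>": 6, "w i d e s t </w>": 3}
merges, final_vocab = learn_bpe(corpus, num_merges=3)
print(merges)  # [('e', 's'), ('es', 't'), ('est', '</w>')]
```

After three merges, the frequent suffix "est" at the end of a word has become a single symbol. Rarer words stay split into smaller pieces, which is how BPE copes with rare words.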

Its central result was that a single task-agnostic architecture could achieve strong performance across multiple NLP tasks through fine-tuning. The tasks included textual entailment, question answering, semantic similarity, and text classification, and the model improved the state of the art on 9 of the 12 tasks studied. This versatility was groundbreaking, since previous approaches typically required task-specific architectures.

However, GPT-1 had notable limitations. With only 117 million parameters, its capacity to store knowledge was limited compared to later models. Its generated text could lose coherence over long passages, becoming repetitive or inconsistent. And although diverse, its training corpus was small by modern standards.

3. GPT-2: Scaling up and zero-shot learning

Architectural improvements and scale

GPT-2 represented a significant leap in scale and capability. It expanded to 1.5 billion parameters in its largest variant. The architecture maintained the same fundamental design as GPT-1 but scaled dramatically. It featured 48 layers and 1600-dimensional hidden states. Training was performed on a substantially larger and more diverse dataset called WebText.

WebText consisted of about 40GB of text from roughly 8 million documents. It was collected by scraping outbound links from Reddit posts that had received at least 3 karma. Using karma as a human quality signal aimed to capture higher-quality content than indiscriminate web scraping.

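The model also changed a few architectural details to keep training stable at scale. Layer normalization was moved to the input of each sub-block, and an extra layer normalization was added after the final self-attention block. The weights of residual layers were scaled at initialization by \(1/\sqrt{N}\), where \(N\) is the number of residual layers. The vocabulary grew to 50,257 tokens, and the context window doubled from 512 to 1,024 tokens, allowing the model to stay coherent over longer passages.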
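The residual-layer initialization scaling (residual weights scaled by \(1/\sqrt{N}\) for \(N\) residual layers) can be illustrated numerically. In the simplified NumPy sketch below, each residual branch’s output is modeled as an independent random vector added to the residual stream. Without scaling, the stream’s standard deviation grows like \(\sqrt{N}\). With scaling, it stays roughly constant as depth increases. Two details are assumptions taken from common open-source reimplementations: a base standard deviation of 0.02, and \(N = 2 \times\) the number of blocks (one attention and one MLP residual per block).

```python
import numpy as np

rng = np.random.default_rng(0)


def residual_stream_std(num_blocks, hidden_size=768, base_std=0.02, scaled=True):
    """Std of the sum of all residual contributions added to the stream.

    Simplification: each residual branch output is an independent Gaussian vector.
    Assumption: N = 2 * num_blocks residual layers (attention + MLP per block).
    """
    n_residual = 2 * num_blocks
    std = base_std / np.sqrt(n_residual) if scaled else base_std
    stream = np.zeros(hidden_size)
    for _ in range(n_residual):
        stream += rng.normal(0.0, std, size=hidden_size)
    return stream.std()


for blocks in (12, 48):
    print(blocks,
          round(residual_stream_std(blocks, scaled=False), 4),
          round(residual_stream_std(blocks, scaled=True), 4))
# Unscaled, the std grows like sqrt(N); scaled, it stays near base_std (0.02).
```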

Zero-shot task transfer capabilities

Perhaps the most significant contribution of GPT-2 was showing that a language model could perform various tasks without explicit fine-tuning, a setting known as zero-shot learning. The task was phrased in the prompt itself, for example by appending “TL;DR:” after an article to elicit a summary. In this way, GPT-2 could attempt translation, summarization, and question answering without any gradient updates.
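A zero-shot prompt simply frames the task as text the model has plausibly seen during pre-training. The sketch below builds two such prompts. The “TL;DR:” cue for summarization follows the GPT-2 paper. The question-answering format and both helper names are illustrative assumptions. The resulting strings would be passed to the model for continuation, with no parameters updated.

```python
def summarization_prompt(article):
    """Zero-shot summarization: append the "TL;DR:" cue after the article."""
    return f"{article.strip()}\nTL;DR:"


def qa_prompt(passage, question):
    """Zero-shot question answering (the Q:/A: format is an illustrative assumption)."""
    return f"{passage.strip()}\nQ: {question.strip()}\nA:"


print(summarization_prompt("The city council voted on Tuesday to expand the bike lane network."))
print(qa_prompt("Paris is the capital of France.", "What is the capital of France?"))
```

Whatever the model generates after “TL;DR:” or “A:” is read off as the answer.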

This emergent behavior suggested that, with sufficient scale and diverse training data, language models develop general-purpose capabilities that can be directed through natural language prompts. In the zero-shot setting, GPT-2 set new state-of-the-art results on 7 of the 8 language modeling datasets it was evaluated on. Its summarization and translation results, however, remained well below supervised systems. This challenged the prevailing paradigm of fine-tuning for each new task.

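Here’s a simplified PyTorch sketch of a single GPT-2 transformer block. It applies layer normalization before each sub-block and adds the result back through a residual connection: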

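```python
import torch
import torch.nn as nn


class GPT2Block(nn.Module):
    """A single pre-LN transformer block in the style of GPT-2 (simplified)."""

    def __init__(self, hidden_size, num_heads, dropout=0.1):
        super().__init__()
        self.ln_1 = nn.LayerNorm(hidden_size)
        # batch_first=True so inputs are (batch, seq_len, hidden_size)
        self.attn = nn.MultiheadAttention(hidden_size, num_heads,
                                          dropout=dropout, batch_first=True)
        self.ln_2 = nn.LayerNorm(hidden_size)
        self.mlp = nn.Sequential(
            nn.Linear(hidden_size, 4 * hidden_size),
            nn.GELU(),
            nn.Linear(4 * hidden_size, hidden_size),
            nn.Dropout(dropout),
        )

    def forward(self, x, mask=None):
        # Apply layer norm once, then self-attention with a residual connection
        h = self.ln_1(x)
        attn_output, _ = self.attn(h, h, h, attn_mask=mask, need_weights=False)
        x = x + attn_output
        # Apply layer norm, then the MLP with a residual connection
        x = x + self.mlp(self.ln_2(x))
        return x


def causal_mask(seq_len):
    # True marks positions a token may NOT attend to (its future)
    return torch.triu(torch.ones(seq_len, seq_len, dtype=torch.bool), diagonal=1)


block = GPT2Block(hidden_size=768, num_heads=12)
x = torch.randn(2, 10, 768)  # (batch, seq_len, hidden_size)
print(block(x, mask=causal_mask(10)).shape)  # torch.Size([2, 10, 768])
```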

Public impact and ethical considerations

GPT-2 garnered significant public attention, partly because OpenAI released it in stages and initially withheld the full 1.5-billion-parameter model over concerns about potential misuse. The model’s ability to generate coherent, human-like text raised important questions about synthetic content, misinformation, and the broader societal impact of powerful language models.

The model demonstrated both impressive capabilities and concerning limitations. While it could generate remarkably fluent text, it sometimes produced factually incorrect information. It exhibited biases present in its training data. Occasionally it generated harmful or inappropriate content. These challenges highlighted the importance of responsible AI development and deployment.

4. GPT-3: The emergence of few-shot learning

Massive scale and architectural refinements

GPT-3 marked an unprecedented leap in model scale, with 175 billion parameters in its largest variant, more than 100 times as many as GPT-2. The architecture kept GPT-2’s core transformer decoder design, including its modified initialization and pre-normalization. Training at this scale relied on partitioning the model across many GPUs, both within individual matrix multiplications and across layers.

Researchers trained the model on a weighted mixture of datasets: filtered Common Crawl, WebText2, Books1, Books2, and English Wikipedia. Common Crawl alone amounted to about 570GB of text after filtering, and training covered roughly 300 billion tokens sampled from the mixture. This large and varied corpus gave GPT-3 broad world knowledge and noticeably stronger reasoning than its predecessors.

The main architectural change was in attention. Layers alternated between dense and locally banded sparse attention patterns, similar to the Sparse Transformer, which reduces the cost of attending over the 2,048-token context. The full model has 96 layers, 12,288-dimensional hidden states, and 96 attention heads.
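To show what “locally banded” means, the sketch below builds boolean causal masks in NumPy. A dense causal mask lets each position attend to every earlier position. A banded one restricts attention to a window of recent positions, and layers alternate between the two. The band width of 3 and the even/odd layer alternation are illustrative assumptions; the exact patterns are those of the Sparse Transformer. Note that these masks mark *allowed* positions with `True`.

```python
import numpy as np


def dense_causal_mask(seq_len):
    """True where query position i may attend to key position j (j <= i)."""
    return np.tril(np.ones((seq_len, seq_len), dtype=bool))


def banded_causal_mask(seq_len, band):
    """True where j <= i and i - j < band: only the `band` most recent positions."""
    i = np.arange(seq_len)[:, None]
    j = np.arange(seq_len)[None, :]
    return (j <= i) & (i - j < band)


def layer_mask(layer_idx, seq_len, band):
    # Illustrative alternation: even layers dense, odd layers banded
    if layer_idx % 2 == 0:
        return dense_causal_mask(seq_len)
    return banded_causal_mask(seq_len, band)


seq_len, band = 6, 3
print(dense_causal_mask(seq_len).astype(int))
print(banded_causal_mask(seq_len, band).astype(int))
# Attended pairs: dense grows ~seq_len**2 / 2, banded ~seq_len * band
print(dense_causal_mask(seq_len).sum(), banded_causal_mask(seq_len, band).sum())
```

For long contexts the banded layers touch far fewer query-key pairs. The interleaved dense layers still let information flow across the whole sequence.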

Few-shot and in-context learning

GPT-3’s most remarkable capability was its few-shot learning performance. The model could adapt to new tasks by simply providing a few examples in the prompt. It did this without any gradient updates. This in-context learning demonstrated that the model could recognize patterns and apply them to new instances purely through prompt engineering.

The few-shot learning paradigm works by conditioning the model on task demonstrations:

$$ P(\text{output} | \text{task description}, \text{examples}, \text{input}; \Theta) $$

In this approach, the model uses the provided context to infer the task structure and generate appropriate outputs. This ability strengthened markedly with scale: in the GPT-3 paper, larger models gained more from in-context examples than smaller ones. This suggests that larger models develop a form of meta-learning during pre-training.

Here’s a practical example of few-shot prompting with GPT-3:

```python
def create_few_shot_prompt(task_description, examples, new_input):
    """
    Create a few-shot learning prompt for GPT-3.

    Args:
        task_description: String describing the task
        examples: List of (input, output) tuples
        new_input: The new input to process

    Returns:
        Formatted prompt string
    """
    prompt = f"{task_description}\n\n"

    # Add the demonstrations
    for i, (input_text, output_text) in enumerate(examples, 1):
        prompt += f"Example {i}:\n"
        prompt += f"Input: {input_text}\n"
        prompt += f"Output: {output_text}\n\n"

    # Add the new input and leave "Output:" open for the model to complete
    prompt += "Now complete this:\n"
    prompt += f"Input: {new_input}\n"
    prompt += "Output:"

    return prompt


# Example usage for sentiment analysis
task_desc = "Classify the sentiment of the following text as positive or negative."

examples = [
    ("This movie was fantastic! I loved every minute.", "Positive"),
    ("Terrible experience, would not recommend.", "Negative"),
    ("An absolute masterpiece of cinema.", "Positive"),
]

new_text = "The product broke after one day of use."
prompt = create_few_shot_prompt(task_desc, examples, new_text)
print(prompt)
```

Commercial applications and ChatGPT

GPT-3 became the foundation for numerous commercial applications. OpenAI provided API access that enabled developers to build products leveraging the model’s capabilities. This democratization of access to powerful language models sparked innovation across industries. Applications ranged from content creation and customer service to code generation and education.

The most impactful descendant of GPT-3 was ChatGPT, released in November 2022. It was fine-tuned from a model in the GPT-3.5 series using reinforcement learning from human feedback (RLHF) to create a conversational AI assistant. ChatGPT showed how instruction-following and alignment techniques could make large language models more useful, safer, and better aligned with user intentions.

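The RLHF training process involves three stages. First, the base model is fine-tuned on human-written demonstrations (supervised fine-tuning). Second, a reward model \(r_\phi(x, y)\) is trained from human comparisons to score a response \(y\) to a prompt \(x\). This is typically done by minimizing a pairwise ranking loss, in which \(y_w\) is the preferred response and \(y_l\) the rejected one:

$$ \mathcal{L}_{\text{RM}}(\phi) = -\mathbb{E}_{(x, y_w, y_l)}\left[\log \sigma\left(r_\phi(x, y_w) - r_\phi(x, y_l)\right)\right] $$

Third, the policy is optimized with reinforcement learning (for example, PPO) to maximize the learned reward while staying close to a reference model:

$$ \max_{\theta} \; \mathbb{E}_{x \sim \mathcal{D},\, y \sim \pi_{\theta}(\cdot \mid x)}\left[r_{\phi}(x, y)\right] - \beta \, D_{\mathrm{KL}}\left[\pi_{\theta} \,\|\, \pi_{\text{ref}}\right] $$

Here, \(r_\phi\) is the reward model and \(\sigma\) is the logistic sigmoid. \(\pi_\theta\) is the policy being optimized, \(\pi_{\text{ref}}\) is the reference model (typically the supervised fine-tuned model), and \(\mathcal{D}\) is the distribution of prompts. The coefficient \(\beta\) controls how far the policy may deviate from the reference policy.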
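In practice the KL term is often estimated per sample from the log-probabilities of the sampled response under the two models. This gives a shaped reward \(r_\phi(x, y) - \beta\,[\log \pi_\theta(y \mid x) - \log \pi_{\text{ref}}(y \mid x)]\). The sketch below computes that quantity from per-token log-probabilities. The numbers and \(\beta = 0.1\) are made up for illustration, and the PPO update that would consume this reward is omitted.

```python
def kl_penalized_reward(reward, policy_logprobs, ref_logprobs, beta=0.1):
    """Shaped reward r(x, y) - beta * [log pi_theta(y|x) - log pi_ref(y|x)].

    policy_logprobs / ref_logprobs: per-token log-probabilities of the sampled
    response y under the policy and under the frozen reference model.
    """
    if len(policy_logprobs) != len(ref_logprobs):
        raise ValueError("log-prob sequences must align token by token")
    # log pi(y|x) is the sum of the response's per-token log-probabilities
    log_ratio = sum(policy_logprobs) - sum(ref_logprobs)
    return reward - beta * log_ratio


# Hypothetical numbers: the policy has drifted to make this response more likely
reward = 2.0
policy_lp = [-0.2, -0.5, -0.1]
ref_lp = [-0.9, -1.1, -0.6]
print(kl_penalized_reward(reward, policy_lp, ref_lp, beta=0.1))  # ~1.82
```

The larger the policy’s log-probability ratio over the reference, the more reward is subtracted. This discourages the policy from drifting into text the reward model scores highly but the reference model finds unlikely.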

5. GPT-4: Multimodal capabilities and enhanced reasoning

Architectural advances and multimodal integration

GPT-4, released in March 2023, is the most capable model covered here and extends the family beyond pure text with multimodal input. OpenAI has not disclosed its parameter count or architectural details. Even so, the model performs substantially better across diverse tasks, particularly in reasoning, coding, and complex problem-solving.

The most visible new capability in GPT-4 is that it accepts both text and image inputs while producing text outputs. This enables applications ranging from visual question answering to understanding documents with embedded images, charts, and diagrams. OpenAI has not published how the vision and language components are integrated.

GPT-4 also offers a longer context window. The base model supports 8,192 tokens, and an extended variant supports 32,768 tokens, roughly 25,000 words of English text. This lets the model process and reason over substantially longer documents than previous versions, which helps on tasks that require understanding a document as a whole.
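When a document exceeds even a 32K window, a common workaround is to split its tokens into overlapping chunks that each fit. The sketch below assumes the text has already been tokenized into a list of token IDs; the tokenizer is out of scope here. The helper name `chunk_tokens` is hypothetical, and the overlap keeps some shared context between neighboring chunks.

```python
def chunk_tokens(token_ids, window, overlap=0):
    """Split token_ids into chunks of at most `window` tokens.

    Consecutive chunks share `overlap` tokens so context carries across boundaries.
    """
    if window <= 0 or not 0 <= overlap < window:
        raise ValueError("need window > 0 and 0 <= overlap < window")
    step = window - overlap
    chunks = []
    for start in range(0, len(token_ids), step):
        chunks.append(token_ids[start:start + window])
        if start + window >= len(token_ids):
            break  # this chunk already reaches the end of the document
    return chunks


doc = list(range(10))  # stand-in for 10 token IDs
print(chunk_tokens(doc, window=4, overlap=1))
# [[0, 1, 2, 3], [3, 4, 5, 6], [6, 7, 8, 9]]
```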

Enhanced reasoning and reduced hallucinations

GPT-4 shows marked improvements in complex reasoning tasks, including mathematical problem-solving, logical inference, and multi-step planning. It scores significantly higher than its predecessors on many standardized tests and professional examinations. For example, OpenAI reported that it scored around the top 10% of test takers on a simulated bar exam, whereas GPT-3.5 scored around the bottom 10%.

A critical improvement in GPT-4 is the reduction in hallucinations, instances where the model generates plausible-sounding but incorrect information. Improved training techniques, including more sophisticated RLHF procedures and adversarial testing, make GPT-4 more reliable at producing factually accurate responses than earlier models. It still hallucinates, however, and its outputs need verification in high-stakes settings.

Chain-of-thought prompting, which asks the model to write out intermediate steps before its final answer, is a common way to draw on this reasoning ability:

```python
def chain_of_thought_prompt(problem, encourage_reasoning=True):
    """
    Create a chain-of-thought prompt to encourage step-by-step reasoning.

    Args:
        problem: The problem statement
        encourage_reasoning: Whether to explicitly request reasoning steps

    Returns:
        Formatted prompt that encourages detailed reasoning
    """
    # strip() removes stray blank lines around a triple-quoted problem
    prompt = f"Problem: {problem.strip()}\n\n"

    if encourage_reasoning:
        # A scaffold of reasoning steps for the model to fill in
        prompt += "Let's solve this step by step:\n"
        prompt += "1. First, let's identify what we know:\n"
        prompt += "2. Next, let's determine what we need to find:\n"
        prompt += "3. Now, let's work through the solution:\n"
        prompt += "4. Finally, let's verify our answer:\n\n"

    return prompt


# Example with a math problem
math_problem = """
A train travels from City A to City B at 60 mph and returns at 40 mph.
If the total travel time is 5 hours, what is the distance between the cities?
"""

prompt = chain_of_thought_prompt(math_problem)
print(prompt)
```
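For reference, the chain of reasoning this prompt is meant to elicit for the train problem is short. With \(d\) the one-way distance in miles, the two legs take \(d/60\) and \(d/40\) hours:

$$ \frac{d}{60} + \frac{d}{40} = 5 \;\Longrightarrow\; \frac{2d + 3d}{120} = 5 \;\Longrightarrow\; \frac{5d}{120} = 5 \;\Longrightarrow\; d = 120 \text{ miles} $$

The verification step checks out: \(120/60 + 120/40 = 2 + 3 = 5\) hours.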

Safety improvements and alignment

GPT-4 incorporates substantial safety improvements. OpenAI reported that it responds to requests for disallowed content 82% less often than GPT-3.5 and is more resistant to adversarial prompts, although jailbreaks remain possible. Before deployment, the model underwent extensive red-teaming and adversarial testing, with particular attention to reducing toxic outputs, bias, and potential misuse.

The alignment process for GPT-4 drew on domain expert feedback. OpenAI engaged more than 50 experts in areas such as AI alignment risks, cybersecurity, biorisk, and international security to adversarially test the model. Their findings informed mitigations and additional training data, for example improving the model’s ability to refuse requests for instructions on synthesizing dangerous chemicals. This targeted feedback helped the model better judge when to express uncertainty and when to refuse inappropriate requests.

6. Comparing GPT architectures and technical evolution

Parameter scaling and computational requirements

The evolution from GPT-1 to GPT-4 shows a clear trend toward larger models with more parameters, with each generation requiring orders of magnitude more training compute. GPT-1’s 117 million parameters pale in comparison to GPT-3’s 175 billion, an increase of roughly 1,500 times.

This scaling follows empirical power laws observed in language models:

$$ L(N) = \left(\frac{N_c}{N}\right)^{\alpha_N} $$

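In this equation, \(L(N)\) is the test loss of a model with \(N\) (non-embedding) parameters, \(N_c\) is a fitted constant, and \(\alpha_N\) is the scaling exponent. Kaplan et al. (2020) found that loss falls predictably with scale along such power laws, but with diminishing returns. Each doubling of \(N\) reduces the loss by the same constant factor rather than by the same absolute amount.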
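To make the power law concrete, the sketch below evaluates \(L(N)\) with the constants reported by Kaplan et al. (2020) for non-embedding parameters, \(\alpha_N \approx 0.076\) and \(N_c \approx 8.8 \times 10^{13}\). Treat the outputs as illustrative only. The fit comes from specific training setups, and the GPT parameter counts plugged in here include embeddings. The ratio \(L(kN)/L(N) = k^{-\alpha_N}\) makes the diminishing returns visible: every tenfold increase in parameters multiplies the loss by the same factor of about 0.84.

```python
ALPHA_N = 0.076  # scaling exponent reported by Kaplan et al. (2020)
N_C = 8.8e13     # fitted constant N_c (non-embedding parameters)


def loss_from_params(n_params):
    """Power-law loss L(N) = (N_c / N) ** alpha_N, in nats per token."""
    return (N_C / n_params) ** ALPHA_N


def loss_ratio(scale_factor):
    """L(k * N) / L(N) = k ** (-alpha_N), independent of N."""
    return scale_factor ** (-ALPHA_N)


for name, n in [("GPT-1", 117e6), ("GPT-2", 1.5e9), ("GPT-3", 175e9)]:
    print(f"{name}: L(N) ~ {loss_from_params(n):.2f}")

print(f"10x params -> loss x {loss_ratio(10):.3f}")  # about 0.84 for every 10x
```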

The computational cost of training these models has grown correspondingly. OpenAI reported training GPT-1 for about a month on 8 GPUs. GPT-3’s training, by contrast, used roughly \(3.14 \times 10^{23}\) floating-point operations (about 3,640 petaflop/s-days), and outside estimates put its cost in the millions of dollars. GPT-4’s training likely required substantially more infrastructure, though OpenAI has not published figures.
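A common back-of-the-envelope estimate for training compute is \(C \approx 6ND\) floating-point operations. Here \(N\) is the parameter count and \(D\) the number of training tokens, and the factor of 6 comes from roughly 2 FLOPs per parameter per token in the forward pass plus 4 in the backward pass. Plugging in GPT-3’s 175 billion parameters and the roughly 300 billion training tokens reported in its paper lands close to the paper’s figure of about \(3.14 \times 10^{23}\) FLOPs, as the sketch below shows. The approximation ignores the attention term that depends on context length, as well as hardware efficiency.

```python
def training_flops(n_params, n_tokens):
    """Approximate training compute C ~ 6 * N * D (forward + backward passes)."""
    return 6 * n_params * n_tokens


def to_petaflop_days(flops):
    """Convert FLOPs to petaflop/s-days (1e15 FLOP/s sustained for one day)."""
    return flops / (1e15 * 86_400)


gpt3_flops = training_flops(175e9, 300e9)
print(f"{gpt3_flops:.2e} FLOPs ~ {to_petaflop_days(gpt3_flops):,.0f} PF-days")
# 3.15e+23 FLOPs ~ 3,646 PF-days
```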

Capability emergence across model sizes

An important phenomenon observed across GPT models is the apparent emergence of new capabilities at certain scale thresholds. Abilities like few-shot learning, complex reasoning, and instruction-following seem to appear somewhat unpredictably as models grow. This suggests that scale can unlock qualitatively new behaviors rather than simply improving existing ones, although some researchers argue that the abruptness partly reflects the evaluation metrics used.

Here’s a comparison of key architectural features:

```python
gpt_models_comparison = {
    "GPT-1": {
        "parameters": "117M",
        "layers": 12,
        "hidden_size": 768,
        "attention_heads": 12,
        "context_length": 512,
        "training_data": "~5GB (BooksCorpus)",
        "key_capability": "Transfer learning through fine-tuning",
    },
    "GPT-2": {
        "parameters": "1.5B (largest)",
        "layers": 48,
        "hidden_size": 1600,
        "attention_heads": 25,
        "context_length": 1024,
        "training_data": "~40GB (WebText)",
        "key_capability": "Zero-shot task transfer",
    },
    "GPT-3": {
        "parameters": "175B",
        "layers": 96,
        "hidden_size": 12288,
        "attention_heads": 96,
        "context_length": 2048,
        "training_data": "~300B tokens (incl. ~570GB filtered Common Crawl)",
        "key_capability": "Few-shot in-context learning",
    },
    "GPT-4": {
        "parameters": "Undisclosed (unofficial estimates >1T)",
        "layers": "Undisclosed",
        "hidden_size": "Undisclosed",
        "attention_heads": "Undisclosed",
        "context_length": "8K-32K",
        "training_data": "Undisclosed (multimodal)",
        "key_capability": "Multimodal reasoning & enhanced safety",
    },
}


def display_model_comparison(models_dict):
    """Print each model's specs as an indented, human-readable list."""
    for model_name, specs in models_dict.items():
        print(f"\n{model_name}:")
        print("-" * 50)
        for key, value in specs.items():
            print(f"  {key.replace('_', ' ').title()}: {value}")


display_model_comparison(gpt_models_comparison)
```

Training efficiency and optimization techniques

Beyond simple parameter scaling, each generation of GPT models has incorporated increasingly sophisticated training techniques. These include mixed-precision training, gradient checkpointing, model parallelism, and improved optimization algorithms. All of these enable efficient training at scale.

Modern GPT models employ various parallelism strategies:

- Data parallelism: Splitting training data across multiple GPUs

- Model parallelism: Distributing model layers across devices

- Pipeline parallelism: Processing different micro-batches at different stages simultaneously

- Tensor parallelism: Splitting individual operations across accelerators
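Tensor parallelism is the least intuitive item in that list, so here is a NumPy sketch of the idea for a two-layer MLP, with plain arrays standing in for devices. Splitting the first weight matrix by columns lets each “device” compute its slice of the hidden activations independently, since an elementwise activation can be applied per slice. Splitting the second matrix by rows then produces partial outputs that are summed. In a real system that sum is an all-reduce across accelerators. This follows the Megatron-LM style of partitioning, and the shapes and device count are arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
batch, d_model, d_hidden, n_devices = 4, 8, 32, 2

x = rng.standard_normal((batch, d_model))
W1 = rng.standard_normal((d_model, d_hidden))
W2 = rng.standard_normal((d_hidden, d_model))


def relu(z):
    return np.maximum(z, 0.0)


# Reference: the full MLP computed on a single device
full_output = relu(x @ W1) @ W2

# Column-split W1 and row-split W2 so each device owns matching slices
W1_shards = np.split(W1, n_devices, axis=1)  # each (d_model, d_hidden / n_devices)
W2_shards = np.split(W2, n_devices, axis=0)  # each (d_hidden / n_devices, d_model)

# Each device computes its partial result without communicating
partial_outputs = [relu(x @ w1) @ w2 for w1, w2 in zip(W1_shards, W2_shards)]

# Summing the partials plays the role of an all-reduce across devices
parallel_output = np.sum(partial_outputs, axis=0)

print(np.allclose(full_output, parallel_output))  # True
```

Pairing a column split with a row split means the devices only need to communicate once per MLP, at the final sum.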

These techniques have been essential for making the training of massive models computationally feasible. However, they introduce additional complexity in implementation and coordination.

7. Future directions and implications for AI development

Emerging trends in language model research

The evolution of GPT models points toward several important trends in language model research. Multimodal integration, as demonstrated in GPT-4, is likely to expand further to video, audio, and other data modalities. Convergence toward unified models that can process and generate multiple types of content would be a significant step toward more general artificial intelligence.

Efficiency improvements are another crucial direction. While scaling has driven much of the progress in GPT models, researchers are increasingly focused on achieving similar capabilities with fewer parameters and lower computational cost. Techniques like knowledge distillation, sparse models, and efficient attention mechanisms aim to make powerful language models more accessible and sustainable.

Another important trend is improved controllability and alignment. Future iterations will likely feature better mechanisms for ensuring that models behave according to user intentions and societal values. Likely goals include fewer hallucinations, more robust safety properties, better uncertainty quantification, and more explicit reasoning.

Impact on the AI landscape and applications

GPT models have fundamentally transformed the AI landscape. They demonstrate that large-scale pre-training on diverse text data can produce models with broad capabilities. These capabilities are applicable to numerous downstream tasks. This paradigm shift has influenced research across domains, from computer vision to robotics. It has inspired similar approaches in other fields.

The practical applications of GPT models continue to expand rapidly. From code generation and debugging to creative writing, education, and scientific research, these models are becoming integral tools across industries. The API-first approach pioneered by OpenAI has enabled countless applications and services built on GPT technology. This has created an entire ecosystem of AI-powered products.

However, challenges remain. The environmental cost of training massive models raises concerns. Questions about bias and fairness persist. Issues of intellectual property and attribution need resolution. The potential for misuse requires ongoing attention. The evolution of GPT models must be accompanied by responsible development practices. Robust governance frameworks and continued research into AI safety and alignment are essential.